Writing Continuous Applications with Structured Streaming Python APIs in Apache Spark

- 1. https://dbricks.co/tutorial-pydata-miami • Enter your cluster name • Use DBR 5.0 and Apache Spark 2.4, Scala 2.11 • Choose Python 3 • WiFi: CIC or CIC-A

- 2. Writing Continuous Applications with Structured Streaming in PySpark Jules S. Damji PyData, Miami, FL Jan 11, 2019

- 3. I have used Apache Spark 2.x Before…

- 4. Apache Spark Community & Developer Advocate @ Databricks • Developer Advocate @ Hortonworks • Software engineering @ Sun Microsystems, Netscape, @Home, VeriSign, Scalix, Centrify, LoudCloud/Opsware, ProQuest • Program Chair, Spark + AI Summit • https://www.linkedin.com/in/dmatrix • @2twitme

- 5. DATABRICKS WORKSPACE Databricks Delta ML Frameworks DATABRICKS CLOUD SERVICE DATABRICKS RUNTIME Reliable & Scalable Simple & Integrated Databricks Unified Analytics Platform APIs Jobs Models Notebooks Dashboards End to end ML lifecycle

- 6. Agenda for Today’s Talk • What and Why Apache Spark • Why Streaming Applications are Difficult • What’s Structured Streaming • Anatomy of a Continuous Application • Tutorials & Demo • Q & A

- 7. How to think about data in 2019 - 2020 “Data is the new oil”

- 8. What’s Apache Spark & Why

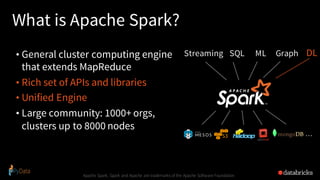

- 9. What is Apache Spark? • General cluster computing engine that extends MapReduce • Rich set of APIs and libraries • Unified Engine • Large community: 1000+ orgs, clusters up to 8000 nodes • Libraries: SQL, Streaming, ML, Graph, …, DL • Apache Spark, Spark and Apache are trademarks of the Apache Software Foundation

- 10. Unique Thing about Spark • Unification: same engine and same API for diverse use cases • Streaming, batch, or interactive • ETL, SQL, machine learning, or graph

- 11. Why Unification?

- 12. Why Unification? • MapReduce: a general engine for batch processing

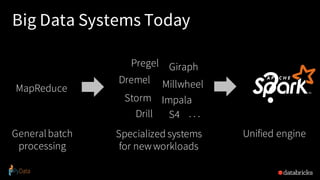

- 13. Big Data Systems Yesterday • MapReduce: general batch processing • Pregel, Dremel, Millwheel, Drill, Giraph, Impala, Storm, S4, . . . : specialized systems for new workloads • Hard to manage, tune, deploy • Hard to combine in pipelines

- 14. Big Data Systems Today • MapReduce: general batch processing • Pregel, Dremel, Millwheel, Drill, Giraph, Impala, Storm, S4, . . . : specialized systems for new workloads • Unified engine ?

- 15. Faster, Easier to Use, Unified • First Distributed Processing Engine • Specialized Data Processing Engines • Unified Data Processing Engine

- 16. Benefits of Unification 1. Simpler to use and operate 2. Code reuse: e.g. write monitoring, fault tolerance (FT), etc. only once 3. New apps that span processing types: e.g. interactive queries on a stream, online machine learning

- 17. An Analogy Specialized devices Unified device New applications

- 18. Why Are Streaming Applications Inherently Difficult?

- 20. Complexities in stream processing COMPLEX DATA Diverse data formats (json, avro, txt, csv, binary, …) Data can be dirty, late, out-of-order COMPLEX SYSTEMS Diverse storage systems (Kafka, S3, Kinesis, RDBMS, …) System failures COMPLEX WORKLOADS Combining streaming with interactive queries Machine learning

- 21. Structured Streaming stream processing on Spark SQL engine fast, scalable, fault-tolerant rich, unified, high level APIs deal with complex data and complex workloads rich ecosystem of data sources integrate with many storage systems

- 22. you should not have to reason about streaming

- 23. Treat Streams as Unbounded Tables • data stream, unbounded input table • new data in the data stream = new rows appended to an unbounded table

- 24. you should write queries & Apache Spark should continuously update the answer

- 25. DataFrames, Datasets, SQL input = spark.readStream .format("kafka") .option("subscribe", "topic") .load() result = input .select("device", "signal") .where("signal > 15") result.writeStream .format("parquet") .start("dest-path") Logical Plan: Read from Kafka • Project device, signal • Filter signal > 15 • Write to Parquet. Apache Spark automatically streamifies! Spark SQL converts a batch-like query to a series of incremental execution plans operating on new batches of data. Series of Incremental Execution Plans: Kafka Source • Optimized Operator (codegen, off-heap, etc.) • Parquet Sink. Optimized Physical Plan: process new data at t = 1, t = 2, t = 3
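The idea of running one batch-like query as a series of incremental steps can be modeled in plain Python. This is only a toy sketch, not Spark code: `query`, `IncrementalRunner`, and the sample rows (including the `site` field) are hypothetical names invented for illustration, and it covers only a stateless project-and-filter query like the one on the slide.

```python
def query(rows):
    """Batch-style query: project device and signal, keep rows with signal > 15."""
    return [
        {"device": row["device"], "signal": row["signal"]}
        for row in rows
        if row["signal"] > 15
    ]


class IncrementalRunner:
    """Applies the same batch query to each new slice of the unbounded input."""

    def __init__(self, batch_query):
        self.batch_query = batch_query
        self.sink = []  # stands in for the Parquet output

    def process_new_data(self, new_rows):
        # Only the rows that arrived since the last trigger are processed.
        result = self.batch_query(new_rows)
        self.sink.extend(result)
        return result


runner = IncrementalRunner(query)
t1 = [
    {"device": "devA", "signal": 18, "site": "x"},
    {"device": "devB", "signal": 9, "site": "y"},
]
t2 = [{"device": "devX", "signal": 21, "site": "z"}]
runner.process_new_data(t1)  # t = 1
runner.process_new_data(t2)  # t = 2
```

For a stateless query like this one, the accumulated sink matches what a single batch run over all rows seen so far would produce.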

- 26. Structured Streaming – Processing Modes

- 27. Structured Streaming Processing Modes

- 29. Streaming word count Anatomy of a Streaming Query

- 30. Anatomy of a Streaming Query: Step 1 spark.readStream .format("kafka") .option("subscribe", "input") .load() Source • Specify one or more locations to read data from • Built-in support for Files/Kafka/Socket, pluggable.

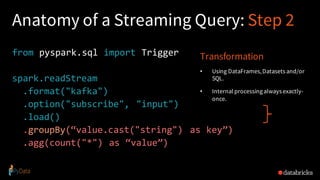

- 31. Anatomy of a Streaming Query: Step 2 from pyspark.sql.functions import col, count spark.readStream .format("kafka") .option("subscribe", "input") .load() .groupBy(col("value").cast("string").alias("key")) .agg(count("*").alias("value")) Transformation • Using DataFrames, Datasets and/or SQL. • Internal processing always exactly-once.

- 32. Anatomy of a Streaming Query: Step 3 from pyspark.sql.functions import col, count spark.readStream .format("kafka") .option("subscribe", "input") .load() .groupBy(col("value").cast("string").alias("key")) .agg(count("*").alias("value")) .writeStream .format("kafka") .option("topic", "output") .trigger(processingTime="1 minute") .outputMode("complete") .option("checkpointLocation", "…") .start() Sink • Accepts the output of each batch. • When supported, sinks are transactional and exactly-once (Files).
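The three steps (source, transformation, sink) can be put together as one PySpark function. This is a minimal sketch under several assumptions: `start_word_count`, `servers`, and `checkpoint_dir` are hypothetical names, the transformation is written with SQL expression strings instead of `col`/`count` so the function needs only a `SparkSession` object, the count is cast to a string because the Kafka sink expects a string or binary `value` column, and a Kafka broker plus the Spark Kafka connector are assumed to be available.

```python
def start_word_count(spark, servers, checkpoint_dir):
    """Start a streaming count of Kafka message values (sketch, not the author's code)."""
    # Step 1: source. Read from the 'input' topic.
    lines = (
        spark.readStream.format("kafka")
        .option("kafka.bootstrap.servers", servers)
        .option("subscribe", "input")
        .load()
    )

    # Step 2: transformation. Cast the binary value to a string and count per key.
    counts = lines.selectExpr("CAST(value AS STRING) AS key").groupBy("key").count()

    # The Kafka sink needs a string or binary 'value' column.
    output = counts.selectExpr("key", "CAST(`count` AS STRING) AS value")

    # Step 3: sink. Write the whole result to the 'output' topic every minute.
    return (
        output.writeStream.format("kafka")
        .option("kafka.bootstrap.servers", servers)
        .option("topic", "output")
        .trigger(processingTime="1 minute")
        .outputMode("complete")
        .option("checkpointLocation", checkpoint_dir)
        .start()
    )
```

In a notebook this would be called as `query = start_word_count(spark, "host:9092", "/some/checkpoint/dir")`, where both arguments are placeholders, followed by `query.awaitTermination()` if the driver should block.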

- 33. Anatomy of a Streaming Query: Output Modes from pyspark.sql.functions import col, count spark.readStream .format("kafka") .option("subscribe", "input") .load() .groupBy(col("value").cast("string").alias("key")) .agg(count("*").alias("value")) .writeStream .format("kafka") .option("topic", "output") .trigger(processingTime="1 minute") .outputMode("update") .option("checkpointLocation", "…") .start() Output mode – What's output • Complete – Output the whole answer every time • Update – Output changed rows • Append – Output new rows only Trigger – When to output • Specified as a time; eventually supports data size • No trigger means as fast as possible
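The difference between Complete and Update can be seen on a running count. The following is a plain-Python model rather than Spark code; `run_triggers` and the sample batches are hypothetical and only mimic what each mode would hand to the sink at every trigger.

```python
from collections import Counter


def run_triggers(batches, mode):
    """Return what a running count would emit at each trigger for the given mode."""
    totals = Counter()
    emitted = []
    for batch in batches:
        changed_keys = set(batch)
        totals.update(batch)
        if mode == "complete":
            # The whole result table, every time.
            emitted.append(dict(totals))
        elif mode == "update":
            # Only the rows whose count changed in this trigger.
            emitted.append({key: totals[key] for key in changed_keys})
        else:
            raise ValueError("this sketch models only 'complete' and 'update'")
    return emitted


batches = [["a", "b", "a"], ["b", "c"]]
complete_output = run_triggers(batches, "complete")
update_output = run_triggers(batches, "update")
```

Append is left out of the sketch on purpose: for an aggregation like this one, Spark needs a watermark before it can decide that a row is final and safe to append.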

- 34. Anatomy of a Streaming Query: Checkpoint from pyspark.sql.functions import col, count spark.readStream .format("kafka") .option("subscribe", "input") .load() .withWatermark("timestamp", "2 minutes") .groupBy(col("value").cast("string").alias("key")) .agg(count("*").alias("value")) .writeStream .format("kafka") .option("topic", "output") .trigger(processingTime="1 minute") .outputMode("update") .option("checkpointLocation", "…") .start() Checkpoint & Watermark • Tracks the progress of a query in persistent storage • Can be used to restart the query if there is a failure. • .trigger(continuous="1 second") • Set checkpoint location & watermark to drop very late events
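How a watermark decides which events are "very late" can be sketched in plain Python. This is a simplified model and not Spark's implementation: `apply_watermark` and the sample events are hypothetical, and the rule assumed here is that the watermark for a batch equals the largest event time seen in earlier batches minus the allowed delay.

```python
def apply_watermark(batches, delay_seconds):
    """Split events into kept and dropped using a trailing event-time watermark."""
    max_event_time = None
    kept, dropped = [], []
    for batch in batches:
        # The watermark is fixed for the batch, based on what was seen before it.
        watermark = None if max_event_time is None else max_event_time - delay_seconds
        for event_time, payload in batch:
            if watermark is not None and event_time < watermark:
                dropped.append((event_time, payload))
            else:
                kept.append((event_time, payload))
        # Advance the maximum event time after the batch has been processed.
        for event_time, _ in batch:
            if max_event_time is None or event_time > max_event_time:
                max_event_time = event_time
    return kept, dropped


batches = [
    [(1000, "a"), (1100, "b")],
    [(900, "c"), (1150, "d")],  # 900 is older than 1100 - 120 = 980
]
kept, dropped = apply_watermark(batches, delay_seconds=120)
```

With a 2 minute delay, the event at time 900 arrives after the watermark has moved to 980 and is dropped, while the event at 1150 is kept.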

- 35. Fault-tolerance with Checkpointing • Checkpointing tracks progress (offsets) of consuming data from the source and intermediate state. • Offsets and metadata saved as JSON • Can resume after changing your streaming transformations • End-to-end exactly-once guarantees • Diagram: process new data at t = 1, t = 2, t = 3, with a write-ahead log
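The way saved offsets allow a query to resume after a failure can be modeled with a small JSON file. This is a toy sketch, not how Spark lays out its checkpoint directory: `run_batch`, the file format, and the dictionary sink are all hypothetical. The sink is keyed by batch id so that replaying a batch overwrites the same entry, which is what keeps the result exactly-once in this model.

```python
import json
import os


def run_batch(source, checkpoint_path, sink, batch_size=2, crash_before_commit=False):
    """Process one batch from the last committed offset; return False when caught up."""
    state = {"batch_id": 0, "offset": 0}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            state = json.load(f)

    start = state["offset"]
    rows = source[start:start + batch_size]
    if not rows:
        return False

    # Idempotent write: a replayed batch lands on the same key.
    sink[state["batch_id"]] = rows

    if crash_before_commit:
        raise RuntimeError("simulated failure before the offset was committed")

    # Commit progress only after the batch output has been written.
    with open(checkpoint_path, "w") as f:
        json.dump({"batch_id": state["batch_id"] + 1, "offset": start + len(rows)}, f)
    return True
```

If a run fails after writing its output but before committing, the next run reads the old offset, reprocesses the same rows, and overwrites the same sink entry, so no record is lost or duplicated.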

- 37. Traditional ETL • Raw, dirty, un/semi-structured data is dumped as files • Periodic jobs run every few hours to convert raw data to structured data ready for further analytics • Hours of delay before taking decisions on latest data • Problem: Unacceptable when time is of the essence • [intrusion, anomaly or fraud detection, monitoring IoT devices, etc.] • Diagram: file dump (seconds) → table (hours)

- 38. Streaming ETL w/ Structured Streaming • Structured Streaming enables raw data to be available as structured data as soon as possible • Diagram: table (seconds)

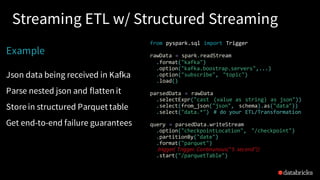

- 39. Streaming ETL w/ Structured Streaming Example: JSON data being received in Kafka • Parse nested JSON and flatten it • Store in structured Parquet table • Get end-to-end failure guarantees from pyspark.sql.functions import from_json rawData = spark.readStream .format("kafka") .option("kafka.bootstrap.servers",...) .option("subscribe", "topic") .load() parsedData = rawData .selectExpr("cast (value as string) as json") .select(from_json("json", schema).alias("data")) .select("data.*") # do your ETL/Transformation query = parsedData.writeStream .option("checkpointLocation", "/checkpoint") .partitionBy("date") .format("parquet") .trigger(processingTime="5 seconds") .start("/parquetTable")

- 40. Reading from Kafka rawData = spark.readStream .format("kafka") .option("kafka.bootstrap.servers",...) .option("subscribe", "topic") .load() The rawData DataFrame has the following columns:

  | key | value | topic | partition | offset | timestamp |
  |---|---|---|---|---|---|
  | [binary] | [binary] | "topicA" | 0 | 345 | 1486087873 |
  | [binary] | [binary] | "topicB" | 3 | 2890 | 1486086721 |

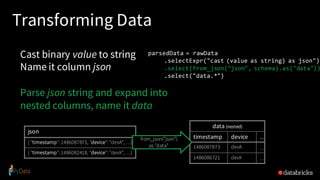

- 41. Transforming Data • Cast binary value to string, name the column json • Parse the json string and expand into nested columns, name it data parsedData = rawData .selectExpr("cast (value as string) as json") .select(from_json("json", schema).alias("data")) .select("data.*") json: { "timestamp": 1486087873, "device": "devA", …} { "timestamp": 1486082418, "device": "devX", …} data (nested): timestamp device … 1486087873 devA … 1486086721 devX … from_json("json") as "data"

- 42. Transforming Data • Cast binary value to string, name the column json • Parse the json string and expand into nested columns, name it data • Flatten the nested columns parsedData = rawData .selectExpr("cast (value as string) as json") .select(from_json("json", schema).alias("data")) .select("data.*") Powerful built-in Python APIs to perform complex data transformations: from_json, to_json, explode, ... 100s of functions (see our blog post & tutorial)
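What the three transformation steps do to a single Kafka record can be traced in plain Python. This is an illustrative model, not the Spark functions themselves: the helper names, the list-of-field-names `schema`, and the extra `signal` field in the sample record are assumptions made for the example.

```python
import json


def cast_value_to_string(record):
    """Mimics: selectExpr("cast (value as string) as json")."""
    return {"json": record["value"].decode("utf-8")}


def parse_json(row, schema):
    """Mimics: from_json("json", schema) named data. Keeps only the schema's fields."""
    parsed = json.loads(row["json"])
    return {"data": {name: parsed.get(name) for name in schema}}


def flatten(row):
    """Mimics: select("data.*"). Promotes the struct's fields to top-level columns."""
    return dict(row["data"])


record = {"value": b'{"timestamp": 1486087873, "device": "devA", "signal": 18}'}
schema = ["timestamp", "device"]  # hypothetical schema: just field names here

as_json = cast_value_to_string(record)
as_struct = parse_json(as_json, schema)
flat_row = flatten(as_struct)
```

The field that is not named in the schema does not appear in the flattened row, which is why the schema passed to `from_json` determines the columns of the resulting table.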

- 43. Writing to Parquet • Save parsed data as a Parquet table in the given path • Partition files by date so that future queries on time slices of data are fast, e.g. a query on the last 48 hours of data query = parsedData.writeStream .option("checkpointLocation", ...) .partitionBy("date") .format("parquet") .start("/parquetTable") #pathname
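The read, transform, and write steps of the streaming ETL example can be combined into one function. This is a hedged sketch rather than the tutorial's notebook code: `start_streaming_etl` and all of its parameters are hypothetical, the schema is assumed to be a DDL string that includes a `date` column, and the JSON parsing is written as a SQL `from_json` expression so the function needs only a `SparkSession` object. A micro-batch `processingTime` trigger is used on the assumption that the Parquet file sink is not available in continuous mode.

```python
def start_streaming_etl(spark, servers, schema_ddl, checkpoint_dir, table_path):
    """Kafka JSON to a date-partitioned Parquet table (sketch under stated assumptions)."""
    # Read: each Kafka record arrives with a binary 'value' column.
    raw_data = (
        spark.readStream.format("kafka")
        .option("kafka.bootstrap.servers", servers)
        .option("subscribe", "topic")
        .load()
    )

    # Transform: cast to string, parse with the schema, flatten the struct.
    parsed_data = (
        raw_data.selectExpr("CAST(value AS STRING) AS json")
        .selectExpr("from_json(json, '{}') AS data".format(schema_ddl))
        .select("data.*")
    )

    # Write: checkpointed, partitioned by the (assumed) 'date' column.
    return (
        parsed_data.writeStream.option("checkpointLocation", checkpoint_dir)
        .partitionBy("date")
        .format("parquet")
        .trigger(processingTime="5 seconds")
        .start(table_path)
    )
```

A call might look like `start_streaming_etl(spark, "host:9092", "timestamp LONG, device STRING, date STRING", "/checkpoint", "/parquetTable")`, where the broker address and schema are placeholders.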

- 44. Tutorials

- 45. Summary • Apache Spark is best suited for unified analytics & processing at scale • Structured Streaming APIs enable Continuous Applications • Demonstrated a Continuous Application

- 46. Resources • Getting Started Guide with Apache Spark on Databricks • docs.databricks.com • Spark Programming Guide • Structured Streaming Programming Guide • Anthology of Technical Assets for Structured Streaming • Databricks Engineering Blogs • https://databricks.com/training/instructor-led-training

- 47. 15% Discount Code: PyDataMiami

- 48. Go to databricks.com/training Apache Spark Training from Databricks
