Artificial neural networks: Supervised learning

- 1. Lecture 7 Artificial neural networks: Supervised learning ■ Introduction, or how the brain works ■ The neuron as a simple computing element ■ The perceptron ■ Multilayer neural networks ■ Accelerated learning in multilayer neural networks ■ The Hopfield network ■ Bidirectional associative memories (BAM) ■ Summary Negnevitsky, Pearson Education, 2011 1

- 2. Introduction, or how the brain works: Machine learning involves adaptive mechanisms that enable computers to learn from experience, learn by example and learn by analogy. Learning capabilities can improve the performance of an intelligent system over time. The most popular approaches to machine learning are artificial neural networks and genetic algorithms. This lecture is dedicated to neural networks.

- 3. ■ A neural network can be defined as a model of reasoning based on the human brain. The brain consists of a densely interconnected set of nerve cells, or basic information-processing units, called neurons. ■ The human brain incorporates nearly 10 billion neurons and 60 trillion connections, synapses, between them. By using multiple neurons simultaneously, the brain can perform its functions much faster than the fastest computers in existence today.

- 4. ■ Each neuron has a very simple structure, but an army of such elements constitutes a tremendous processing power. ■ A neuron consists of a cell body, soma, a number of fibers called dendrites, and a single long fiber called the axon.

- 5. ■ Our brain can be considered as a highly complex, non-linear and parallel information-processing system. ■ Information is stored and processed in a neural network simultaneously throughout the whole network, rather than at specific locations. In other words, in neural networks, both data and its processing are global rather than local. ■ Learning is a fundamental and essential characteristic of biological neural networks. The ease with which they can learn led to attempts to emulate a biological neural network in a computer.

- 6. ■ An artificial neural network consists of a number of very simple processors, also called neurons, which are analogous to the biological neurons in the brain. ■ The neurons are connected by weighted links passing signals from one neuron to another. ■ The output signal is transmitted through the neuron’s outgoing connection. The outgoing connection splits into a number of branches that transmit the same signal. The outgoing branches terminate at the incoming connections of other neurons in the network.

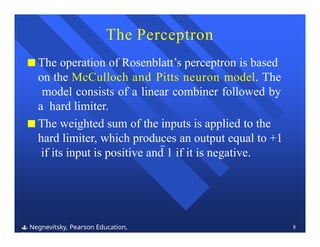

- 7. Can a single neuron learn a task? ■ In 1958, Frank Rosenblatt introduced a training algorithm that provided the first procedure for training a simple ANN: a perceptron. ■ The perceptron is the simplest form of a neural network. It consists of a single neuron with adjustable synaptic weights and a hard limiter.

- 8. The Perceptron ■ The operation of Rosenblatt’s perceptron is based on the McCulloch and Pitts neuron model. The model consists of a linear combiner followed by a hard limiter. ■ The weighted sum of the inputs is applied to the hard limiter, which produces an output equal to +1 if its input is positive and −1 if it is negative.
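
The linear combiner followed by a hard limiter can be sketched in a few lines of Python. This is only an illustration: the threshold argument, the example weights, and the choice to map a net input of exactly zero to +1 are assumptions of the sketch, not details given on the slide.

```python
def hard_limiter(net):
    """Sign activation: +1 for a positive net input, -1 for a negative one.

    Mapping a net input of exactly 0 to +1 is an assumption of this sketch.
    """
    return 1 if net >= 0 else -1


def perceptron_output(inputs, weights, threshold=0.0):
    """Linear combiner (weighted sum minus threshold) followed by the hard limiter."""
    net = sum(x * w for x, w in zip(inputs, weights)) - threshold
    return hard_limiter(net)


# Hypothetical weights and threshold, chosen only for illustration.
print(perceptron_output([1, 1], [0.5, 0.5], threshold=0.7))  # net is about 0.3, prints 1
print(perceptron_output([1, 0], [0.5, 0.5], threshold=0.7))  # net is about -0.2, prints -1
```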

- 9. How does the perceptron learn its classification tasks? This is done by making small adjustments in the weights to reduce the difference between the actual and desired outputs of the perceptron. The initial weights are randomly assigned, usually in the range [−0.5, 0.5], and then updated to obtain the output consistent with the training examples.
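
A minimal sketch of this learning process, assuming the classic perceptron learning rule (each weight is corrected by learning rate × input × error) and a threshold treated as a weight on a fixed input of −1. The learning rate, the epoch limit, the seed, and the use of logical AND as the training task are illustrative choices, not taken from the slide.

```python
import random


def predict(x, weights, theta):
    """Hard-limited output (+1 / -1) of a single perceptron."""
    net = sum(xi * wi for xi, wi in zip(x, weights)) - theta
    return 1 if net >= 0 else -1


def train_perceptron(samples, alpha=0.1, max_epochs=1000, seed=0):
    """Train on (inputs, desired) pairs until an epoch produces no errors."""
    rng = random.Random(seed)
    n = len(samples[0][0])
    # Initial weights and threshold drawn from [-0.5, 0.5].
    weights = [rng.uniform(-0.5, 0.5) for _ in range(n)]
    theta = rng.uniform(-0.5, 0.5)
    for _ in range(max_epochs):
        mistakes = 0
        for x, desired in samples:
            error = desired - predict(x, weights, theta)
            if error != 0:
                mistakes += 1
                # Small adjustment that reduces the actual/desired difference.
                weights = [wi + alpha * xi * error for xi, wi in zip(x, weights)]
                theta -= alpha * error  # threshold = weight on a fixed input of -1
        if mistakes == 0:
            break
    return weights, theta


# Logical AND with outputs coded as -1 / +1 (a linearly separable task).
AND = [([0, 0], -1), ([0, 1], -1), ([1, 0], -1), ([1, 1], 1)]
weights, theta = train_perceptron(AND)
print([predict(x, weights, theta) for x, _ in AND])
```

Because AND is linearly separable, the loop stops once every training example is classified correctly.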

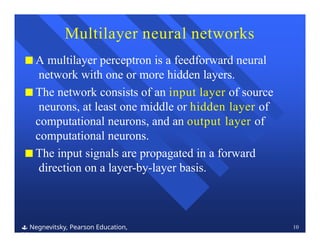

- 10. Multilayer neural networks ■ A multilayer perceptron is a feedforward neural network with one or more hidden layers. ■ The network consists of an input layer of source neurons, at least one middle or hidden layer of computational neurons, and an output layer of computational neurons. ■ The input signals are propagated in a forward direction on a layer-by-layer basis.

- 11. What does the middle layer hide? ■ A hidden layer “hides” its desired output. Neurons in the hidden layer cannot be observed through the input/output behaviour of the network. There is no obvious way to know what the desired output of the hidden layer should be. ■ Commercial ANNs incorporate three and sometimes four layers, including one or two hidden layers. Each layer can contain from 10 to 1000 neurons. Experimental neural networks may have five or even six layers, including three or four hidden layers, and utilise millions of neurons.

- 12. Back-propagation neural network ■ Learning in a multilayer network proceeds the same way as for a perceptron. ■ A training set of input patterns is presented to the network. ■ The network computes its output pattern, and if there is an error (in other words, a difference between the actual and desired output patterns), the weights are adjusted to reduce this error.

- 13. ■ In a back-propagation neural network, the learning algorithm has two phases. ■ First, a training input pattern is presented to the network input layer. The network propagates the input pattern from layer to layer until the output pattern is generated by the output layer. ■ If this pattern is different from the desired output, an error is calculated and then propagated backwards through the network from the output layer to the input layer. The weights are modified as the error is propagated.

- 14. The back-propagation training algorithm. Step 1: Initialisation. Set all the weights and threshold levels of the network to random numbers uniformly distributed inside a small range: $\left(-\frac{2.4}{F_i}, +\frac{2.4}{F_i}\right)$, where $F_i$ is the total number of inputs of neuron $i$ in the network. The weight initialisation is done on a neuron-by-neuron basis.
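
A short sketch of neuron-by-neuron initialisation, assuming the range $(-2.4/F_i, +2.4/F_i)$ for a neuron with $F_i$ inputs. The function name `init_neuron` and the seeded generator are hypothetical choices made for the example.

```python
import random


def init_neuron(num_inputs, rng=random):
    """Return (weights, threshold) for one neuron with `num_inputs` inputs.

    Every value is drawn uniformly from (-2.4 / F_i, +2.4 / F_i),
    where F_i is the neuron's number of inputs (assumed range).
    """
    limit = 2.4 / num_inputs
    weights = [rng.uniform(-limit, limit) for _ in range(num_inputs)]
    threshold = rng.uniform(-limit, limit)
    return weights, threshold


# A hidden layer of three neurons, each with two inputs: range is (-1.2, +1.2).
rng = random.Random(42)
hidden_layer = [init_neuron(2, rng) for _ in range(3)]
print(hidden_layer)
```

Scaling the range by the number of inputs keeps the initial net input of each neuron small, so the sigmoid starts away from its flat, saturated regions.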

- 15. Step 2: Activation. Activate the back-propagation neural network by applying inputs $x_1(p), x_2(p), \ldots, x_n(p)$ and desired outputs $y_{d,1}(p), y_{d,2}(p), \ldots, y_{d,n}(p)$. (a) Calculate the actual outputs of the neurons in the hidden layer: $y_j(p) = \mathrm{sigmoid}\left[\sum_{i=1}^{n} x_i(p)\, w_{ij}(p) - \theta_j\right]$, where $n$ is the number of inputs of neuron $j$ in the hidden layer, and sigmoid is the sigmoid activation function.

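- 16. Step 2: Activation (continued). (b) Calculate the actual outputs of the neurons in the output layer: $y_k(p) = \mathrm{sigmoid}\left[\sum_{j=1}^{m} x_{jk}(p)\, w_{jk}(p) - \theta_k\right]$, where $m$ is the number of inputs of neuron $k$ in the output layer and $x_{jk}(p) = y_j(p)$ is the output of hidden neuron $j$.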
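
The activation step can be sketched as a forward pass through one hidden layer and one output layer. The sketch assumes each neuron computes the sigmoid of its weighted input sum minus its threshold. The weight layout (`weights[i][j]` connects input `i` to neuron `j`) and the function names are illustrative assumptions.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def layer_outputs(inputs, weights, thresholds):
    """Outputs of one layer; weights[i][j] links input i to neuron j."""
    return [
        sigmoid(sum(x * weights[i][j] for i, x in enumerate(inputs)) - thresholds[j])
        for j in range(len(thresholds))
    ]


def forward(x, w_hidden, th_hidden, w_out, th_out):
    """Step 2(a) then 2(b): hidden-layer outputs feed the output layer."""
    hidden = layer_outputs(x, w_hidden, th_hidden)
    output = layer_outputs(hidden, w_out, th_out)
    return hidden, output


# With all weights and thresholds at zero, every neuron outputs sigmoid(0) = 0.5.
hidden, output = forward([1, 0], [[0, 0], [0, 0]], [0, 0], [[0], [0]], [0])
print(hidden, output)
```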

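- 17. Step 3: Weight training. Update the weights in the back-propagation network by propagating backward the errors associated with output neurons. (a) Calculate the error gradient for the neurons in the output layer: $\delta_k(p) = y_k(p)\,[1 - y_k(p)]\, e_k(p)$, where $e_k(p) = y_{d,k}(p) - y_k(p)$. Calculate the weight corrections: $\Delta w_{jk}(p) = \alpha\, y_j(p)\, \delta_k(p)$, where $\alpha$ is the learning rate. Update the weights at the output neurons: $w_{jk}(p+1) = w_{jk}(p) + \Delta w_{jk}(p)$.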

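- 18. Step 3: Weight training (continued). (b) Calculate the error gradient for the neurons in the hidden layer: $\delta_j(p) = y_j(p)\,[1 - y_j(p)] \sum_{k=1}^{l} \delta_k(p)\, w_{jk}(p)$, where $l$ is the number of output neurons. Calculate the weight corrections: $\Delta w_{ij}(p) = \alpha\, x_i(p)\, \delta_j(p)$. Update the weights at the hidden neurons: $w_{ij}(p+1) = w_{ij}(p) + \Delta w_{ij}(p)$.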
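
One full training iteration (activation followed by weight training) might look like the sketch below. It assumes the standard sigmoid error gradients: for an output neuron, $\delta_k = y_k(1 - y_k)\,e_k$; for a hidden neuron, $\delta_j = y_j(1 - y_j)\sum_k \delta_k w_{jk}$. Thresholds are updated as weights attached to a fixed input of −1. The function name and the list-of-lists weight layout are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def backprop_step(x, desired, w_h, th_h, w_o, th_o, alpha=0.1):
    """One iteration of Steps 2 and 3; updates the weight lists in place.

    w_h[i][j]: input i -> hidden j;  w_o[j][k]: hidden j -> output k.
    Returns the output errors e_k = desired_k - actual_k.
    """
    n_in, n_h, n_o = len(x), len(th_h), len(th_o)
    # Step 2: activation (forward pass).
    y_h = [sigmoid(sum(x[i] * w_h[i][j] for i in range(n_in)) - th_h[j])
           for j in range(n_h)]
    y_o = [sigmoid(sum(y_h[j] * w_o[j][k] for j in range(n_h)) - th_o[k])
           for k in range(n_o)]
    # Step 3(a): error gradients at the output layer.
    errors = [desired[k] - y_o[k] for k in range(n_o)]
    d_o = [y_o[k] * (1 - y_o[k]) * errors[k] for k in range(n_o)]
    # Step 3(b): hidden gradients, computed with the weights before the update.
    d_h = [y_h[j] * (1 - y_h[j]) * sum(d_o[k] * w_o[j][k] for k in range(n_o))
           for j in range(n_h)]
    # Weight corrections; each threshold is a weight on a fixed input of -1.
    for k in range(n_o):
        for j in range(n_h):
            w_o[j][k] += alpha * y_h[j] * d_o[k]
        th_o[k] += alpha * (-1) * d_o[k]
    for j in range(n_h):
        for i in range(n_in):
            w_h[i][j] += alpha * x[i] * d_h[j]
        th_h[j] += alpha * (-1) * d_h[j]
    return errors
```

Computing both sets of gradients before changing any weight matters: the hidden-layer gradient must use the output weights from the current iteration, not the freshly updated ones.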

- 19. Step 4: Iteration. Increase iteration p by one, go back to Step 2 and repeat the process until the selected error criterion is satisfied. As an example, we may consider the three-layer back-propagation network. Suppose that the network is required to perform the logical operation Exclusive-OR. Recall that a single-layer perceptron could not do this operation. Now we will apply the three-layer net.

- 20. ■ The effect of the threshold applied to a neuron in the hidden or output layer is represented by its weight, $\theta$, connected to a fixed input equal to −1. ■ The initial weights and threshold levels are set randomly as follows: $w_{13} = 0.5$, $w_{14} = 0.9$, $w_{23} = 0.4$, $w_{24} = 1.0$, $w_{35} = -1.2$, $w_{45} = 1.1$, $\theta_3 = 0.8$, $\theta_4 = -0.1$ and $\theta_5 = 0.3$.
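
As a worked check, the sketch below runs the first forward pass of the Exclusive-OR example for the training pair $x_1 = x_2 = 1$ with desired output 0. It assumes the signs $w_{35} = -1.2$ and $\theta_4 = -0.1$ and a fixed threshold input of −1 (minus signs are easily lost when slides are extracted to text), with neurons 3 and 4 in the hidden layer and neuron 5 as the output.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


# Training pair for Exclusive-OR: 1 XOR 1 = 0.
x1, x2, desired = 1, 1, 0

# Initial weights and thresholds (signs of w35 and theta4 are assumed).
w13, w14, w23, w24 = 0.5, 0.9, 0.4, 1.0
w35, w45 = -1.2, 1.1
theta3, theta4, theta5 = 0.8, -0.1, 0.3

# Hidden layer (neurons 3 and 4), then the output neuron 5.
y3 = sigmoid(x1 * w13 + x2 * w23 - theta3)
y4 = sigmoid(x1 * w14 + x2 * w24 - theta4)
y5 = sigmoid(y3 * w35 + y4 * w45 - theta5)
error = desired - y5

print(f"{y3:.4f} {y4:.4f} {y5:.4f} {error:.4f}")
# prints: 0.5250 0.8808 0.5097 -0.5097
```

The non-zero error is what Step 3 then propagates backwards to correct the weights.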

- 21. Accelerated learning in multilayer neural networks ■ A multilayer network learns much faster when the sigmoidal activation function is represented by a hyperbolic tangent: $Y^{\tanh} = \frac{2a}{1 + e^{-bX}} - a$, where $a$ and $b$ are constants. Suitable values for $a$ and $b$ are: $a = 1.716$ and $b = 0.667$.
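
A small sketch of the hyperbolic tangent activation, assuming the form $Y = \frac{2a}{1 + e^{-bX}} - a$, which is algebraically the same as $a \tanh(bX/2)$. The function name is illustrative.

```python
import math

A, B = 1.716, 0.667  # constants suggested on the slide


def tanh_activation(x, a=A, b=B):
    """Scaled hyperbolic tangent: 2a / (1 + exp(-b x)) - a."""
    return 2.0 * a / (1.0 + math.exp(-b * x)) - a


# The output is zero-centred and saturates at -a and +a.
for x in (-10.0, -1.0, 0.0, 1.0, 10.0):
    print(x, tanh_activation(x))
```

Unlike the logistic sigmoid, whose outputs lie in (0, 1), this function is symmetric about zero, which is the usual explanation for the faster learning.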

- 22. ■ We also can accelerate training by including a momentum term in the delta rule: $\Delta w_{jk}(p) = \beta\, \Delta w_{jk}(p-1) + \alpha\, y_j(p)\, \delta_k(p)$, where $\beta$ is a positive number ($0 \le \beta < 1$) called the momentum constant. Typically, the momentum constant is set to 0.95. This equation is called the generalised delta rule.
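
The generalised delta rule can be sketched for a single weight as follows, assuming the form $\Delta w(p) = \beta\,\Delta w(p-1) + \alpha\, y_j(p)\,\delta_k(p)$. The function name, the learning rate of 0.1, and the sample values are hypothetical.

```python
def momentum_update(weight, prev_delta_w, y_j, delta_k, alpha=0.1, beta=0.95):
    """Apply one generalised-delta-rule update to a single weight.

    Returns the new weight and the correction just applied, which must be
    passed back in as `prev_delta_w` at the next iteration.
    """
    delta_w = beta * prev_delta_w + alpha * y_j * delta_k
    return weight + delta_w, delta_w


# Two consecutive iterations with the same gradient: the second step is larger
# because it carries 95% of the first correction forward.
w, dw = momentum_update(1.0, 0.0, y_j=0.5, delta_k=0.2)
print(w, dw)
w, dw = momentum_update(w, dw, y_j=0.5, delta_k=0.2)
print(w, dw)
```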

- 23. Learning with adaptive learning rate. To accelerate the convergence and yet avoid the danger of instability, we can apply two heuristics: Heuristic 1: If the change of the sum of squared errors has the same algebraic sign for several consecutive epochs, then the learning rate parameter, $\alpha$, should be increased. Heuristic 2: If the algebraic sign of the change of the sum of squared errors alternates for several consecutive epochs, then the learning rate parameter, $\alpha$, should be decreased.

- 24. ■ Adapting the learning rate requires some changes in the back-propagation algorithm. ■ If the sum of squared errors at the current epoch exceeds the previous value by more than a predefined ratio (typically 1.04), the learning rate parameter is decreased (typically by multiplying by 0.7) and new weights and thresholds are calculated. ■ If the error is less than the previous one, the learning rate is increased (typically by multiplying by 1.05).
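
The rate-adaptation rule can be sketched as a function called once per epoch. The function and parameter names are hypothetical. Leaving the rate unchanged when the error grows by less than the ratio is an assumption, since the slide does not cover that case.

```python
def adapt_learning_rate(alpha, sse, prev_sse, ratio=1.04, decrease=0.7, increase=1.05):
    """Return the learning rate to use for the next epoch.

    sse / prev_sse: sum of squared errors at the current / previous epoch.
    """
    if sse > prev_sse * ratio:
        return alpha * decrease   # error grew too much: slow down
    if sse < prev_sse:
        return alpha * increase   # error fell: speed up
    return alpha                  # small increase: keep the rate (assumption)


print(adapt_learning_rate(0.1, sse=1.10, prev_sse=1.0))  # decreased
print(adapt_learning_rate(0.1, sse=0.90, prev_sse=1.0))  # increased
print(adapt_learning_rate(0.1, sse=1.02, prev_sse=1.0))  # unchanged
```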

- 25. The Hopfield Network ■ Neural networks were designed on analogy with the brain. The brain’s memory, however, works by association. For example, we can recognise a familiar face even in an unfamiliar environment within 100-200 ms. We can also recall a complete sensory experience, including sounds and scenes, when we hear only a few bars of music. The brain routinely associates one thing with another.

- 26. ■ Multilayer neural networks trained with the back-propagation algorithm are used for pattern recognition problems. However, to emulate the human memory’s associative characteristics we need a different type of network: a recurrent neural network. ■ A recurrent neural network has feedback loops from its outputs to its inputs. The presence of such loops has a profound impact on the learning capability of the network.

- 27. ■ The stability of recurrent networks intrigued several researchers in the 1960s and 1970s. However, none was able to predict which network would be stable, and some researchers were pessimistic about finding a solution at all. The problem was solved only in 1982, when John Hopfield formulated the physical principle of storing information in a dynamically stable network.

- 28. ■ In the Hopfield network, synaptic weights between neurons are usually represented in matrix form as follows: $\mathbf{W} = \sum_{m=1}^{M} \mathbf{Y}_m \mathbf{Y}_m^T - M\,\mathbf{I}$, where $M$ is the number of states to be memorised by the network, $\mathbf{Y}_m$ is the $n$-dimensional binary vector, $\mathbf{I}$ is the $n \times n$ identity matrix, and superscript $T$ denotes a matrix transposition.
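
A minimal sketch of the weight-matrix construction, assuming the form $\mathbf{W} = \sum_m \mathbf{Y}_m \mathbf{Y}_m^T - M\,\mathbf{I}$ with bipolar (+1 / −1) state vectors. The function name is hypothetical, and the two opposite three-neuron states are used purely as an example.

```python
def hopfield_weights(memories):
    """Sum of outer products Y_m Y_m^T minus M times the identity matrix."""
    n = len(memories[0])
    num_memories = len(memories)
    W = [[0] * n for _ in range(n)]
    for y in memories:
        for i in range(n):
            for j in range(n):
                W[i][j] += y[i] * y[j]
    for i in range(n):
        W[i][i] -= num_memories  # removes self-connections (zero diagonal)
    return W


W = hopfield_weights([[1, 1, 1], [-1, -1, -1]])
for row in W:
    print(row)
# prints:
# [0, 2, 2]
# [2, 0, 2]
# [2, 2, 0]
```

Subtracting $M\,\mathbf{I}$ zeroes the diagonal, so no neuron feeds back directly onto itself.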

- 29. ■ The remaining six states are all unstable. However, stable states (also called fundamental memories) are capable of attracting states that are close to them. ■ The fundamental memory (1, 1, 1) attracts unstable states (−1, 1, 1), (1, −1, 1) and (1, 1, −1). Each of these unstable states represents a single error, compared to the fundamental memory (1, 1, 1). ■ The fundamental memory (−1, −1, −1) attracts unstable states (−1, −1, 1), (−1, 1, −1) and (1, −1, −1). ■ Thus, the Hopfield network can act as an error correction network.
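
The error-correcting behaviour can be reproduced with a short sketch. It assumes a three-neuron network storing (1, 1, 1) and (−1, −1, −1), which gives the weight matrix hard-coded below, along with zero thresholds and a sign activation that leaves a neuron unchanged when its net input is exactly zero. Updating all neurons at once (synchronously) is a simplification chosen for brevity.

```python
from itertools import product

# Weight matrix for the fundamental memories (1, 1, 1) and (-1, -1, -1).
W = [[0, 2, 2],
     [2, 0, 2],
     [2, 2, 0]]


def recall(state, weights, max_iters=10):
    """Iterate the network until the state stops changing."""
    state = list(state)
    for _ in range(max_iters):
        new_state = []
        for i, row in enumerate(weights):
            net = sum(w * s for w, s in zip(row, state))
            # Sign activation; a zero net input keeps the previous output.
            new_state.append(1 if net > 0 else -1 if net < 0 else state[i])
        if new_state == state:
            break
        state = new_state
    return state


# Every one of the eight possible states settles on the nearest memory.
for probe in product([1, -1], repeat=3):
    print(probe, "->", recall(probe, W))
```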

- 30. Storage capacity of the Hopfield network ■ Storage capacity is the largest number of fundamental memories that can be stored and retrieved correctly. ■ The maximum number of fundamental memories $M_{max}$ that can be stored in the $n$-neuron recurrent network is limited by $M_{max} = 0.15\,n$.

- 31. Bidirectional associative memory (BAM) ■ The Hopfield network represents an autoassociative type of memory: it can retrieve a corrupted or incomplete memory but cannot associate this memory with another different memory. ■ Human memory is essentially associative. One thing may remind us of another, and that of another, and so on. We use a chain of mental associations to recover a lost memory. If we forget where we left an umbrella, we try to recall where we last had it, what we were doing, and who we were talking to. We attempt to establish a chain of associations, and thereby to restore a lost memory.

- 32. ■ To associate one memory with another, we need a recurrent neural network capable of accepting an input pattern on one set of neurons and producing a related, but different, output pattern on another set of neurons. ■ Bidirectional associative memory (BAM), first proposed by Bart Kosko, is a heteroassociative network. It associates patterns from one set, set A, to patterns from another set, set B, and vice versa. Like a Hopfield network, the BAM can generalise and also produce correct outputs despite corrupted or incomplete inputs.

- 33. The basic idea behind the BAM is to store pattern pairs so that when n-dimensional vector X from set A is presented as input, the BAM recalls m-dimensional vector Y from set B, but when Y is presented as input, the BAM recalls X.

- 34. ■ To develop the BAM, we need to create a correlation matrix for each pattern pair we want to store. The correlation matrix is the matrix product of the input vector $\mathbf{X}$ and the transpose of the output vector, $\mathbf{Y}^T$. The BAM weight matrix is the sum of all correlation matrices, that is, $\mathbf{W} = \sum_{m=1}^{M} \mathbf{X}_m \mathbf{Y}_m^T$, where $M$ is the number of pattern pairs to be stored in the BAM.
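
The storage and bidirectional recall described above can be sketched as follows. The sketch assumes bipolar (+1 / −1) patterns, a weight matrix formed as the sum of the outer products $\mathbf{X}_m \mathbf{Y}_m^T$, and recall by a single sign-thresholded pass in each direction. The two pattern pairs and all function names are hypothetical.

```python
def bam_weights(pairs):
    """n x m weight matrix: sum over pairs of the outer product X Y^T."""
    n, m = len(pairs[0][0]), len(pairs[0][1])
    W = [[0] * m for _ in range(n)]
    for x, y in pairs:
        for i in range(n):
            for j in range(m):
                W[i][j] += x[i] * y[j]
    return W


def sign(v):
    # Mapping a net input of exactly 0 to +1 is an assumption of this sketch.
    return 1 if v >= 0 else -1


def recall_y(W, x):
    """Present X on the first layer: Y = sign(W^T X)."""
    return [sign(sum(W[i][j] * x[i] for i in range(len(x)))) for j in range(len(W[0]))]


def recall_x(W, y):
    """Present Y on the second layer: X = sign(W Y)."""
    return [sign(sum(W[i][j] * y[j] for j in range(len(y)))) for i in range(len(W))]


# Two illustrative pattern pairs: 6-dimensional X, 3-dimensional Y.
pairs = [
    ([1, 1, 1, 1, 1, 1], [1, 1, 1]),
    ([1, 1, -1, -1, 1, 1], [1, -1, 1]),
]
W = bam_weights(pairs)
for x, y in pairs:
    print(recall_y(W, x) == y, recall_x(W, y) == x)
```

Both stored pairs are recalled correctly in either direction, which is the heteroassociative behaviour the BAM is built for.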

- 35. Stability and storage capacity of the BAM ■ The BAM is unconditionally stable. This means that any set of associations can be learned without risk of instability. ■ The maximum number of associations to be stored in the BAM should not exceed the number of neurons in the smaller layer. ■ The more serious problem with the BAM is incorrect convergence. The BAM may not always produce the closest association. In fact, a stable association may be only slightly related to the initial input vector.
