Classification using back propagation algorithm

- 2. Backpropagation Algorithms The back-propagation learning algorithm is one of the most important developments in neural networks. Backpropagation is the generalization of the Widrow-Hoff learning rule to multiple-layer networks and nonlinear differentiable transfer functions. This learning algorithm is applied to multilayer feed-forward networks consisting of processing elements with continuous differentiable activation functions. The networks associated with the back-propagation algorithm are also called back-propagation networks (BPNs).

- 3. Backpropagation Algorithms The aim of the neural network is to train the net to achieve a balance between the net's ability to respond correctly to the inputs used in training (memorization) and its ability to give reasonable responses to input that is similar, but not identical, to the input used in training (generalization).

- 4. Architecture This section presents the architecture of the network that is most commonly used with the backpropagation algorithm: the multilayer feedforward network.

- 5. Architecture Feedforward Network Feedforward networks often have one or more hidden layers of sigmoid neurons followed by an output layer of linear neurons. Multiple layers of neurons with nonlinear transfer functions allow the network to learn nonlinear and linear relationships between input and output vectors. The linear output layer lets the network produce values outside the range -1 to +1. On the other hand, if you want to constrain the outputs of a network (such as between 0 and 1), then the output layer should use a sigmoid transfer function (such as logsig).

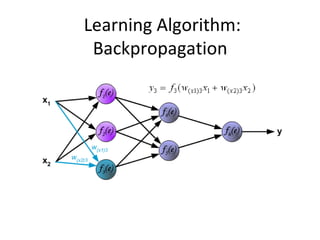
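
The effect of the output-layer transfer function can be illustrated with a minimal sketch. It assumes a logistic sigmoid (logsig) in the hidden layer; the weights, biases, layer sizes and helper names (`layer`, `linear`) are hypothetical and chosen only for illustration.

```python
import math

def logsig(e):
    """Logistic sigmoid: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-e))

def linear(e):
    """Linear transfer function: passes the adder output through unchanged."""
    return e

def layer(inputs, weights, biases, transfer):
    """One fully connected layer; each row of `weights` feeds one neuron."""
    return [
        transfer(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

# Hypothetical weights: 2 inputs -> 2 hidden sigmoid neurons -> 1 output neuron.
hidden_w, hidden_b = [[0.5, -0.4], [0.3, 0.8]], [0.1, -0.2]
output_w, output_b = [[3.0, 2.5]], [0.5]

x = [1.0, 2.0]
hidden = layer(x, hidden_w, hidden_b, logsig)

# Same hidden signals, two different output layers.
unbounded = layer(hidden, output_w, output_b, linear)[0]  # can leave [-1, +1]
bounded = layer(hidden, output_w, output_b, logsig)[0]    # stays inside (0, 1)
```

With these particular weights the linear output is larger than 1, while the sigmoid output of the same network remains between 0 and 1.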

- 6. Learning Algorithm: Backpropagation The following slides describe the teaching process of a multi-layer neural network employing the backpropagation algorithm. To illustrate this process, a three-layer neural network with two inputs and one output, shown in the picture below, is used.

- 7. Learning Algorithm: Backpropagation Each neuron is composed of two units. The first unit adds the products of the weight coefficients and the input signals. The second unit realises a nonlinear function, called the neuron transfer (activation) function. Signal e is the adder output signal, and y = f(e) is the output signal of the nonlinear element. Signal y is also the output signal of the neuron.
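
The two units of a neuron can be sketched as two small functions. The logistic sigmoid is an assumed choice of f (the slides only require a nonlinear, differentiable function), and the input and weight values are made up for the example.

```python
import math

def adder(inputs, weights):
    """First unit: sum of products of weight coefficients and input signals."""
    return sum(w * x for w, x in zip(weights, inputs))

def f(e):
    """Second unit: nonlinear transfer function (logistic sigmoid assumed)."""
    return 1.0 / (1.0 + math.exp(-e))

def neuron(inputs, weights):
    """Return both the adder output e and the neuron output y = f(e)."""
    e = adder(inputs, weights)
    y = f(e)
    return e, y

# Hypothetical signals and weights: e = 0.5*1.0 + (-0.25)*2.0 = 0.0
e, y = neuron([1.0, 2.0], [0.5, -0.25])
```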

- 8. Learning Algorithm: Backpropagation To teach the neural network we need a training data set. The training data set consists of input signals (x1 and x2) paired with the corresponding target (desired output) z. Network training is an iterative process. In each iteration the weight coefficients of the nodes are modified using new data from the training data set. The modification is calculated using the algorithm described below. Each teaching step starts by applying both input signals from the training set to the network. After this stage we can determine the output signal values for each neuron in each network layer.

- 9. Learning Algorithm: Backpropagation The pictures below illustrate how the signal propagates through the network. Symbols w(xm)n represent the weights of connections between network input xm and neuron n in the input layer. Symbols yn represent the output signal of neuron n.

- 12. Learning Algorithm: Backpropagation Propagation of signals through the hidden layer. Symbols wmn represent weights of connections between output of neuron m and input of neuron n in the next layer.

- 15. Learning Algorithm: Backpropagation Propagation of signals through the output layer.
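
The forward propagation described on the preceding slides can be sketched as a loop over layers. The layer sizes (three neurons, then two, then one output neuron), the weight values and the sigmoid f are assumptions made for illustration; the slides state only that the network has three layers, two inputs and one output.

```python
import math

def f(e):
    """Assumed transfer function: logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-e))

def forward(x, layers):
    """Propagate the input signals through the network layer by layer.

    layers[k][n][m] is the weight from signal m of the previous layer to
    neuron n of layer k. Returns the signals of every layer, inputs first.
    """
    signals = [list(x)]
    for weights in layers:
        prev = signals[-1]
        signals.append(
            [f(sum(w * s for w, s in zip(row, prev))) for row in weights]
        )
    return signals

# Assumed sizes: 2 inputs -> neurons 1-3 -> neurons 4-5 -> neuron 6.
layers = [
    [[0.2, -0.1], [0.4, 0.3], [-0.5, 0.6]],  # w(xm)n: inputs x1, x2 to neurons 1-3
    [[0.1, -0.2, 0.3], [0.5, 0.4, -0.6]],    # wmn: neurons 1-3 to neurons 4-5
    [[0.7, -0.8]],                           # wmn: neurons 4-5 to output neuron 6
]

signals = forward([1.0, 0.5], layers)
y = signals[-1][0]  # output signal of the network
```

Keeping the signals of every layer, rather than only the final output, is a deliberate choice here: the weight modification step needs the input signals of each neuron.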

- 16. Learning Algorithm: Backpropagation In the next step of the algorithm, the output signal of the network y is compared with the desired output value (the target) z, which is found in the training data set. The difference is called the error signal d of the output layer neuron.

- 17. Learning Algorithm: Backpropagation The idea is to propagate the error signal d (computed in a single teaching step) back to all neurons whose output signals were inputs to the neuron in question.

- 19. Learning Algorithm: Backpropagation The weight coefficients wmn used to propagate errors back are equal to those used when computing the output value. Only the direction of data flow is changed (signals are propagated from output to inputs, one layer after the other). This technique is used for all network layers. If the propagated errors come from several neurons, they are added. The illustration is below:
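
The backward pass of the error signal, as described on these slides, can be sketched as follows. The neuron numbering, layer sizes and weight values are hypothetical; the only rules taken from the text are that d = z - y at the output, that the forward weights wmn are reused, and that errors reaching one neuron from several neurons are added.

```python
def output_error(z, y):
    """Error signal d of the output neuron: target z minus actual output y."""
    return z - y

def backpropagate_errors(next_weights, next_errors, n_prev):
    """Error signals of the previous layer.

    next_weights[n][m] is the weight wmn from neuron m (previous layer) to
    neuron n (next layer), the same weight that was used in the forward pass.
    Contributions reaching neuron m from several neurons are added.
    """
    return [
        sum(next_weights[n][m] * next_errors[n] for n in range(len(next_errors)))
        for m in range(n_prev)
    ]

# Hypothetical values: target 1.0, network output 0.5.
d_out = [output_error(z=1.0, y=0.5)]

w_out = [[0.5, -1.0]]                           # neurons 4, 5 -> neuron 6
d_layer2 = backpropagate_errors(w_out, d_out, 2)

w_layer2 = [[1.0, 2.0, 0.0], [0.5, 0.5, 1.0]]   # neurons 1-3 -> neurons 4, 5
d_layer1 = backpropagate_errors(w_layer2, d_layer2, 3)
```

Note that no derivative appears in this step; in the procedure described here the derivative of the activation function enters only when the weights are modified.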

- 20. Learning Algorithm: Backpropagation Once the error signal for each neuron has been computed, the weight coefficients of each neuron's input nodes may be modified. In the update formulas, df(e)/de represents the derivative of the activation function of the neuron whose weights are being modified.
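
A common form of this weight modification is w'mn = wmn + eta * dn * df(en)/de * ym, where ym is the signal arriving on the connection and eta is a learning rate. The learning rate eta is an assumption (the text does not name it), as is the logistic sigmoid, for which df(e)/de = f(e) * (1 - f(e)). A minimal sketch for a single neuron:

```python
import math

def f(e):
    """Assumed transfer function: logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-e))

def df_de(e):
    """Derivative of the logistic sigmoid: f(e) * (1 - f(e))."""
    y = f(e)
    return y * (1.0 - y)

def update_weights(weights, inputs, e, d, eta):
    """Return the modified input weights of one neuron.

    weights: current weight coefficients of the neuron's inputs
    inputs:  signals that arrived on those inputs in the forward pass
    e:       adder output of the neuron in the forward pass
    d:       error signal of the neuron
    eta:     learning rate (assumed parameter, not named in the slides)
    """
    step = eta * d * df_de(e)
    return [w + step * x for w, x in zip(weights, inputs)]

# Hypothetical values: df_de(0.0) = 0.25, so step = 0.8 * 0.5 * 0.25 = 0.1
new_w = update_weights(weights=[0.5, -0.25], inputs=[1.0, 2.0],
                       e=0.0, d=0.5, eta=0.8)
```

Each weight moves in proportion to the signal that passed through it, so connections that carried no signal in this teaching step are left unchanged.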

- 23. Backpropagation applications Backpropagation networks have been successful on a wide array of real-world data, including handwritten character recognition, pathology and laboratory medicine, and training a computer to pronounce English text.

- 24. Backpropagation terminologies Epoch; epoch updating; terminating condition.
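
These three terms can be illustrated with a small training loop. The sketch assumes their usual meanings: an epoch is one pass over the whole training set, epoch updating accumulates the weight increments and applies them once per epoch, and the terminating condition is either a small enough error or a maximum number of epochs. To keep the focus on the terms, it trains a single sigmoid neuron with a bias input rather than a multilayer network; the function name, parameters, thresholds and the OR data set are all hypothetical.

```python
import math

def f(e):
    """Assumed transfer function: logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-e))

def train(data, eta=0.5, max_epochs=10000, threshold=0.05):
    """Train one sigmoid neuron with epoch updating.

    Returns the final weights and the sum of squared errors of each epoch.
    """
    n_inputs = len(data[0][0])
    weights = [0.0] * (n_inputs + 1)        # last weight belongs to the bias
    history = []
    for _ in range(max_epochs):             # terminating condition: epoch limit
        increments = [0.0] * len(weights)
        sse = 0.0
        for x, z in data:                   # one epoch = one pass over the data
            xb = list(x) + [1.0]            # append the constant bias input
            y = f(sum(w * s for w, s in zip(weights, xb)))
            d = z - y                       # error signal for this sample
            sse += d * d
            for i, s in enumerate(xb):
                increments[i] += eta * d * y * (1.0 - y) * s
        history.append(sse)
        if sse < threshold:                 # terminating condition: small error
            break
        # Epoch updating: apply the accumulated increments once per epoch.
        weights = [w + dw for w, dw in zip(weights, increments)]
    return weights, history

# Hypothetical training set: logical OR of two inputs.
or_data = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]
weights, history = train(or_data)
```

The alternative to epoch updating is to modify the weights after every training sample; in this sketch that would mean moving the weight update inside the inner loop.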