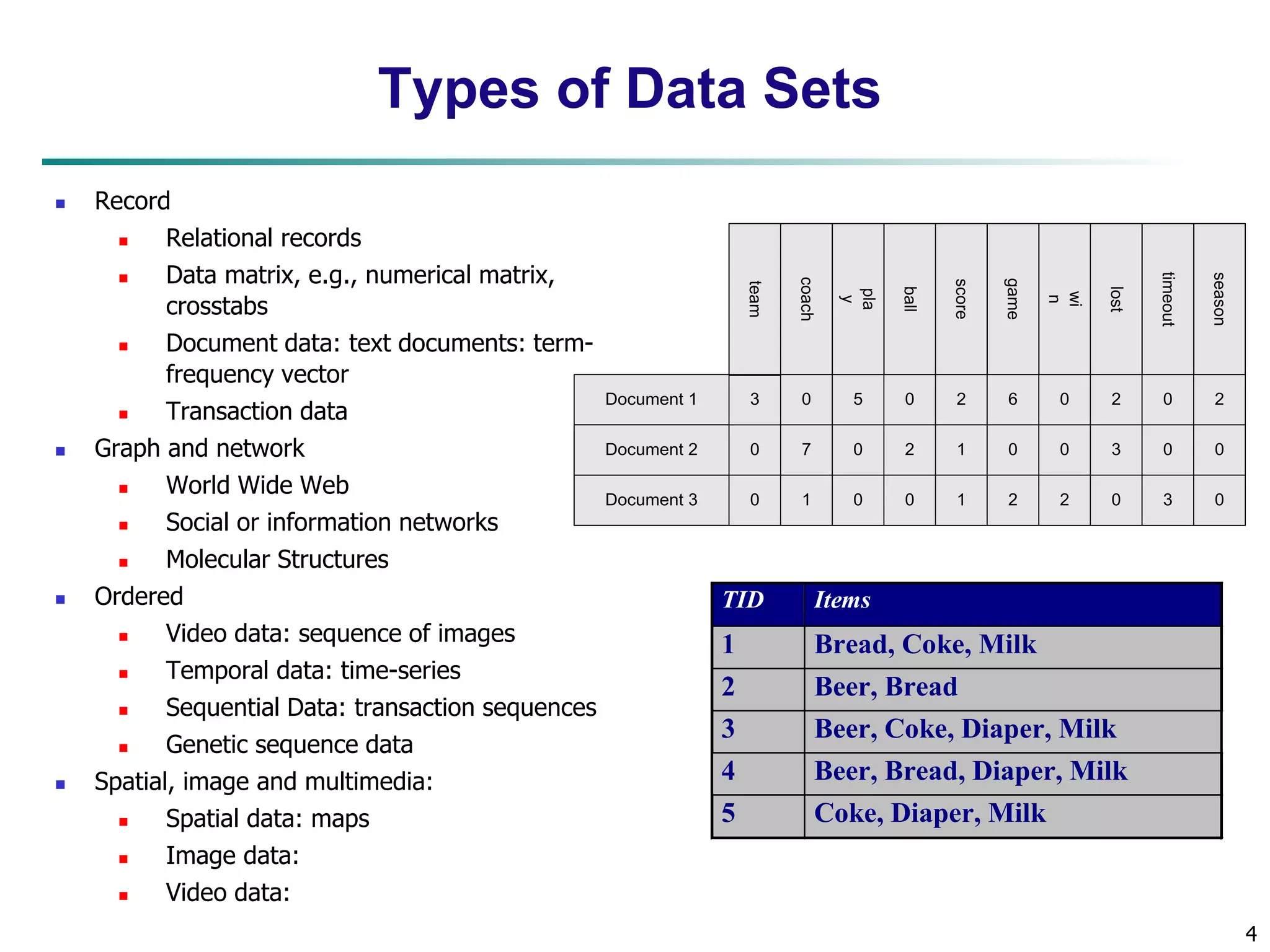

Chapter 2 of 'Data Mining: Concepts and Techniques' covers how to understand data: data objects, attribute types, basic statistical descriptions, and data visualization. It describes the main types of data sets, explains how to measure similarity and dissimilarity between data objects, and shows how visualization helps reveal patterns and relationships. The chapter also emphasizes the characteristics of structured data and the role of attributes in analyzing data effectively.

![15

Measuring the Dispersion of Data

Quartiles, outliers and boxplots

Quartiles: Q1 (25th percentile), Q3 (75th percentile)

Inter-quartile range: IQR = Q3 − Q1

Five-number summary: min, Q1, median, Q3, max

Boxplot: ends of the box are the quartiles; median is marked; add whiskers, and plot outliers individually

Outlier: usually, a value more than 1.5 × IQR above Q3 or below Q1

Variance and standard deviation (sample: s, population: σ)

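Variance: (algebraic, scalable computation)

Sample variance: $s^2 = \frac{1}{n-1}\sum_{i=1}^{n}(x_i-\bar{x})^2 = \frac{1}{n-1}\left[\sum_{i=1}^{n}x_i^2 - \frac{1}{n}\left(\sum_{i=1}^{n}x_i\right)^2\right]$

Population variance: $\sigma^2 = \frac{1}{N}\sum_{i=1}^{N}(x_i-\mu)^2 = \frac{1}{N}\sum_{i=1}^{N}x_i^2 - \mu^2$

Standard deviation s (or σ) is the square root of variance s² (or σ²)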

](https://image.slidesharecdn.com/02data-140913211051-phpapp02/75/Data-Mining-Concepts-and-Techniques-Chapter-2-15-2048.jpg)
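
The dispersion measures on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the book's code: the function names and the sample values are made up for the example, and the quartiles use the `inclusive` method of `statistics.quantiles`, which is only one of several conventions (other tools may return slightly different Q1 and Q3). The variance function uses the single-scan form based on running sums of x and x².

```python
import statistics


def five_number_summary(values):
    """Return (min, Q1, median, Q3, max) for a list of at least two numbers."""
    xs = sorted(values)
    # Assumption: "inclusive" quartiles; other conventions give other cut points.
    q1, median, q3 = statistics.quantiles(xs, n=4, method="inclusive")
    return xs[0], q1, median, q3, xs[-1]


def iqr_outliers(values, k=1.5):
    """Return the values lying more than k * IQR below Q1 or above Q3."""
    _, q1, _, q3, _ = five_number_summary(values)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [x for x in values if x < lower or x > upper]


def sample_variance_one_pass(values):
    """Sample variance computed from running sums of x and x^2 in one scan."""
    n = 0
    total = 0.0
    total_sq = 0.0
    for x in values:
        n += 1
        total += x
        total_sq += x * x
    return (total_sq - total * total / n) / (n - 1)


data = [30, 36, 47, 50, 52, 52, 56, 60, 63, 70, 70, 110]  # illustrative values
print(five_number_summary(data))
print(iqr_outliers(data))
print(sample_variance_one_pass(data), statistics.variance(data))
```

The single-scan form is convenient for large or partitioned data because the sums can be accumulated independently and merged, but it can lose precision when the mean is large relative to the spread; a two-pass or Welford-style update is a safer choice in that case.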

![33

Scatterplot Matrices

Used by permission of M. Ward, Worcester Polytechnic Institute

Matrix of scatterplots (x-y-diagrams) of the k-dim. data [total of (k² − k)/2 distinct scatterplots]](https://image.slidesharecdn.com/02data-140913211051-phpapp02/75/Data-Mining-Concepts-and-Techniques-Chapter-2-33-2048.jpg)
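
As a quick check on how many panels a scatterplot matrix needs, the sketch below enumerates the distinct attribute pairs for k attributes. The helper name and attribute labels are hypothetical; the count of unordered pairs is k(k − 1)/2.

```python
from itertools import combinations


def scatterplot_pairs(attributes):
    """Return every unordered pair of attributes, one per distinct scatterplot."""
    return list(combinations(attributes, 2))


attrs = ["a1", "a2", "a3", "a4"]  # hypothetical attribute names
pairs = scatterplot_pairs(attrs)
k = len(attrs)
print(len(pairs), k * (k - 1) // 2)
```

A full k × k matrix display usually shows each pair twice (mirrored across the diagonal), so the grid has more panels than there are distinct pairs.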

![35

Parallel Coordinates

n equidistant axes which are parallel to one of the screen axes and correspond to the attributes

The axes are scaled to the [minimum, maximum] range of the corresponding attribute

Every data item corresponds to a polygonal line which intersects each of the axes at the point which corresponds to the value for the attribute

Axes: Attr. 1, Attr. 2, Attr. 3, …, Attr. k](https://image.slidesharecdn.com/02data-140913211051-phpapp02/75/Data-Mining-Concepts-and-Techniques-Chapter-2-35-2048.jpg)
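
The construction described on this slide can be sketched without any plotting library: scale each attribute to its own [minimum, maximum] range, then emit one polyline per data item with a vertex on each axis. The function name is hypothetical, and drawing a constant attribute at mid-axis is an assumption of this sketch, since the slide does not cover that case.

```python
def parallel_coordinate_polylines(rows):
    """Return one polyline per row as a list of (axis_index, scaled_value)."""
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    polylines = []
    for row in rows:
        line = []
        for axis, value in enumerate(row):
            span = highs[axis] - lows[axis]
            # Assumption: an attribute with a single value sits at mid-axis.
            y = (value - lows[axis]) / span if span else 0.5
            line.append((axis, y))
        polylines.append(line)
    return polylines


rows = [[1, 10], [3, 20], [2, 30]]  # three items, two attributes (made-up data)
for line in parallel_coordinate_polylines(rows):
    print(line)
```

The returned vertices can be passed to any line-drawing routine; the axis index gives the horizontal position and the scaled value the height on that axis.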

![49

Similarity and Dissimilarity

Similarity

Numerical measure of how alike two data objects are

Value is higher when objects are more alike

Often falls in the range [0,1]

Dissimilarity (e.g., distance)

Numerical measure of how different two data objects are

Lower when objects are more alike

Minimum dissimilarity is often 0

Upper limit varies

Proximity refers to a similarity or dissimilarity](https://image.slidesharecdn.com/02data-140913211051-phpapp02/75/Data-Mining-Concepts-and-Techniques-Chapter-2-49-2048.jpg)
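
To make the two notions concrete, the sketch below uses Euclidean distance as a dissimilarity and converts it to a similarity in (0, 1] with 1 / (1 + d). Both choices are assumptions for illustration: the slide does not prescribe a particular distance or conversion, and other mappings are equally valid.

```python
import math


def euclidean_dissimilarity(a, b):
    """Dissimilarity: 0 for identical objects, with no fixed upper limit."""
    return math.dist(a, b)


def similarity_from_distance(d):
    """One possible conversion: 1 for identical objects, approaching 0 as d grows."""
    return 1.0 / (1.0 + d)


p, q = (0.0, 0.0), (3.0, 4.0)  # made-up two-dimensional objects
d = euclidean_dissimilarity(p, q)
print(d, similarity_from_distance(d))
```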

![59

Ordinal Variables

An ordinal variable can be discrete or continuous

Order is important, e.g., rank

Can be treated like interval-scaled

replace $x_{if}$ by their rank $r_{if} \in \{1, \dots, M_f\}$

map the range of each variable onto [0, 1] by replacing i-th object in the f-th variable by

$z_{if} = \frac{r_{if} - 1}{M_f - 1}$

compute the dissimilarity using methods for interval-scaled variables](https://image.slidesharecdn.com/02data-140913211051-phpapp02/75/Data-Mining-Concepts-and-Techniques-Chapter-2-59-2048.jpg)
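
The rank-and-rescale steps for ordinal variables can be sketched as follows. The function names and the level labels are hypothetical; the mapping itself is z = (r − 1) / (M − 1), where r is the rank of a value and M is the number of ordered levels. The absolute difference used for the dissimilarity is one simple interval-scaled measure, chosen here only for illustration.

```python
def ordinal_to_unit_interval(values, ordered_levels):
    """Map ordinal values onto [0, 1] using their rank among ordered_levels."""
    m = len(ordered_levels)
    if m < 2:
        raise ValueError("need at least two ordered levels")
    # Ranks start at 1 for the lowest level and end at M for the highest.
    rank = {level: r for r, level in enumerate(ordered_levels, start=1)}
    return [(rank[v] - 1) / (m - 1) for v in values]


def ordinal_dissimilarity(z_i, z_j):
    """Assumption: absolute difference of the rescaled ranks."""
    return abs(z_i - z_j)


levels = ["fair", "good", "excellent"]  # hypothetical ordered levels
z = ordinal_to_unit_interval(["excellent", "fair", "good", "excellent"], levels)
print(z)
print(ordinal_dissimilarity(z[0], z[1]))
```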