Introducing Apache Carbon Data - Hadoop Native Columnar Data Format

- 1. HUAWEI TECHNOLOGIES CO., LTD. CarbonData : A New Hadoop File Format For Faster Data Analysis

- 2. 2 Outline Use Case & Motivation : Why introducing a new file format? CarbonData File Format Deep Dive Framework Integrated with CarbonData Performance Demo Future Plan

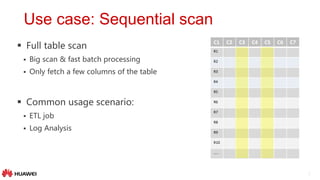

- 3. 3 Full table scan Big scan & fast batch processing Only fetch a few columns of the table Common usage scenario: ETL job Log Analysis Use case: Sequential scan C1 C2 C3 C4 C5 C6 C7 R1 R2 R3 R4 R5 R6 R7 R8 R9 R10 …..

- 4. 4 Multi-dimensional data analysis Involves aggregation / join Roll-up, Drill-down, Slicing and Dicing Low-latency ad-hoc query Common usage scenario: Dash-board reporting Fraud & Ad-hoc Analysis Use case: OLAP-Style Query C1 C2 C3 C4 C5 C6 C7 R1 R2 R3 R4 R5 R6 R7 R8 R9 R10 R11

- 5. 5 Predicate filtering on range of columns Full row keys or range of keys lookup Narrow scan but might fetch all columns Requires second/sub-second level low-latency Common usage scenario: Operational query User profiling Use case: Random Access C1 C2 C3 C4 C5 C6 C7 R1 R2 R3 R4 R5 R6 R7 R8 R9 R10 ……

- 6. 6 Motivation Random Access (narrow scan) Sequential Access (big scan) OLAP Style Query (multi-dimensional analysis) CarbonData: A Single File Format suits for different types of access

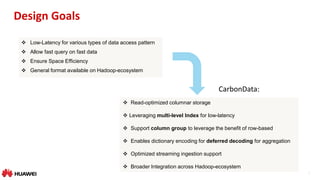

- 7. 7 Design Goals Low-Latency for various types of data access pattern Allow fast query on fast data Ensure Space Efficiency General format available on Hadoop-ecosystem Read-optimized columnar storage Leveraging multi-level Index for low-latency Support column group to leverage the benefit of row-based Enables dictionary encoding for deferred decoding for aggregation Optimized streaming ingestion support Broader Integration across Hadoop-ecosystem CarbonData:

- 8. 8 Outline Use cases & Motivation: Why introducing a new file format? CarbonData File Format Deep Dive Framework Integrated with CarbonData Performance Demo Future Plan

- 9. 9 Carbon File CarbonData File Structure Blocklet : A set of rows in columnar format Default blocklet size: ~120k rows Balance between efficient scan and compression Column chunk : Data for one column/column group in a Blocklet Allow multiple columns forms a column group & stored as row-based Column data stored as sorted index Footer : Metadata information File level metadata & statistics Schema Blocklet Index & Blocklet level Metadata Blocklet 1 Col1 Chunk Col2 Chunk … Colgroup1 Chunk Colgroup2 Chunk … Blocklet N … Footer

- 10. 10 Carbon Data File Blocklet 1 Column 1 Chunk Column 2 Chunk … ColumnGroup 1 Chunk ColumnGroup 2 Chunk … Blocklet N File Footer Blocklet Index Blocklet 1 Index Node •Minmax index: min, max •Multi-dimensional index: startKey, endKey Blocklet N Index Node … … Blocklet Info Blocklet 1 Info Blocklet N Info •Column 1 Chunk Info •Compression scheme •ColumnFormat •ColumnID list •ColumnChunk length •ColumnChunk offset … File Metadata Version, No. Row, … Segment Info Schema Schema for each column Blocklet Index Blocklet Info ColumnGroup1 Chunk Info … … Format

- 11. 11 Years Quarters Months Territory Country Quantity Sales 2003 QTR1 Jan EMEA Germany 142 11,432 2003 QTR1 Jan APAC China 541 54,702 2003 QTR1 Jan EMEA Spain 443 44,622 2003 QTR1 Feb EMEA Denmark 545 58,871 2003 QTR1 Feb EMEA Italy 675 56,181 2003 QTR1 Mar APAC India 52 9,749 2003 QTR1 Mar EMEA UK 570 51,018 2003 QTR1 Mar Japan Japan 561 55,245 2003 QTR2 Apr APAC Australia 525 50,398 2003 QTR2 Apr EMEA Germany 144 11,532 [1,1,1,1,1] : [142,11432] [1,1,1,3,2] : [541,54702] [1,1,1,1,3] : [443,44622] [1,1,2,1,4] : [545,58871] [1,1,2,1,5] : [675,56181] [1,1,3,3,6] : [52,9749] [1,1,3,1,7] : [570,51018] [1,1,3,2,8] : [561,55245] [1,2,4,3,9] : [525,50398] [1,2,4,1,1] : [144,11532] Blocklet • Data are sorted along MDK (multi-dimensional keys) • data stored as index in columnar format Encoding Blocklet Logical View Sort (MDK Index) [1,1,1,1,1] : [142,11432] [1,1,1,1,3] : [443,44622] [1,1,1,3,2] : [541,54702] [1,1,2,1,4] : [545,58871] [1,1,2,1,5] : [675,56181] [1,1,3,1,7] : [570,51018] [1,1,3,2,8] : [561,55245] [1,1,3,3,6] : [52,9749] [1,2,4,1,1] : [144,11532] [1,2,4,3,9] : [525,50398] Sorted MDK Index 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 2 2 1 1 1 2 2 3 3 3 4 4 1 1 3 1 1 1 2 3 1 3 142 443 541 545 675 570 561 52 144 525 11432 44622 54702 58871 56181 51018 55245 9749 11532 50398 C1 C2 C3 C4 C5 C6 C7 1 3 2 4 5 7 8 6 1 9

- 12. 12 File Level Blocklet Index Block 1 1 1 1 1 1 1 12000 1 1 1 2 1 2 5000 1 1 2 1 1 1 12000 1 1 2 2 1 2 5000 1 1 3 1 1 1 12000 1 1 3 2 1 2 5000 Block 2 1 2 1 3 2 3 11000 1 2 2 3 2 3 11000 1 2 3 3 2 3 11000 1 3 1 4 3 4 2000 1 3 1 5 3 4 1000 1 3 2 4 3 4 2000 Block 3 1 3 2 5 3 4 1000 1 3 3 4 3 4 2000 1 3 3 5 3 4 1000 1 4 1 4 1 1 20000 1 4 2 4 1 1 20000 1 4 3 4 1 1 20000 Block 4 2 1 1 1 1 1 12000 2 1 1 2 1 2 5000 2 1 2 1 1 1 12000 2 1 2 2 1 2 5000 2 1 3 1 1 1 12000 2 1 3 2 1 2 5000 Blocklet Index Block1 Start Key1 End Key1 Start Key1 End Key4 Start Key1 End Key2 Start Key3 End Key4 Start Key1 End Key1 Start Key2 End Key2 Start Key3 End Key3 Start Key4 End Key4 File FooterBlocklet • Build in-memory file level MDK index tree for filtering • Major optimization for efficient scan C1(Min, Max) …. C7(Min, Max) Block4 Start Key4 End Key4 C1(Min, Max) …. C7(Min, Max) C1(Min,Max) … C7(Min,Max) C1(Min,Max) … C7(Min,Max) C1(Min,Max) … C7(Min,Max) C1(Min,Max) … C7(Min,Max)

- 13. 13 Blocklet Rows [1|1] :[1|1] :[1|1] :[1|1] :[1|1] : [142]:[11432] [1|2] :[1|2] :[1|2] :[1|2] :[1|9] : [443]:[44622] [1|3] :[1|3] :[1|3] :[1|4] :[2|3] : [541]:[54702] [1|4] :[1|4] :[2|4] :[1|5] :[3|2] : [545]:[58871] [1|5] :[1|5] :[2|5] :[1|6] :[4|4] : [675]:[56181] [1|6] :[1|6] :[3|6] :[1|9] :[5|5] : [570]:[51018] [1|7] :[1|7] :[3|7] :[2|7] :[6|8] : [561]:[55245] [1|8] :[1|8] :[3|8] :[3|3] :[7|6] : [52]:[9749] [1|9] :[2|9] :[4|9] :[3|8] :[8|7] : [144]:[11532] [1|10]:[2|10]:[4|10]:[3|10] :[9|10] : [525]:[50398] Blocklet ( sort column within column chunk) Run Length Encoding & Compression Dim1 Block 1(1-10) Dim2 Block 1(1-8) 2(9-10) Dim3 Block 1(1-3) 2(4-5) 3(6-8) 4(9-10) Dim4 Block 1(1-2,4-6,9) 2(7) 3(3,8,10) Measure1 Block Measure2 Block Dim5 Block 1(1,9) 2(3) 3(2) 4(4) 5(5) 6(8) 7(6) 8(7) 9(10) Columnar Store Column chunk Level inverted Index [142]:[11432] [443]:[44622] [541]:[54702] [545]:[58871] [675]:[56181] [570]:[51018] [561]:[55245] [52]:[9749] [144]:[11532] [525]:[50398] Column Chunk Inverted Index • Optionally store column data as inverted index within column chunk • suitable to low cardinality column • better compression & fast predicate filtering Blocklet Physical View 1 10 142 443 541 545 675 570 561 52 144 525 11432 44622 54702 58871 56181 51018 55245 9749 11532 50398 C1 d r d r d r d r d r d r 1 10 1 8 2 2 1 10 1 3 2 2 3 3 4 2 1 10 1 6 2 1 3 3 1 2 4 3 9 1 7 1 3 1 … 1 2 2 1 3 1 4 1 5 1 … 1 1 9 1 3 1 2 1 4 1 … C2 C3 C4 C5 C6 C7

- 14. 14 10 2 23 23 38 15.2 10 2 50 15 29 18.5 10 3 51 18 52 22.8 11 6 60 29 16 32.9 12 8 68 32 18 21.6 Blocklet 1 C1 C2 C3 C4 C6C5 Col Chunk Col Chunk Col Chunk Col Chunk Column Group • Allow multiple columns form a column group • stored as a single column chunk in row- based format • suitable to set of columns frequently fetched together • saving stitching cost for reconstructing row Col Chunk

- 15. 15 Nested Data Type Representation • Represented as a composite of two columns • One column for the element value • One column for start_index & length of Array Arrays • Represented as a composite of finite number of columns • Each struct element is a separate column Struts Name Array<Ph_Number> John [192,191] Sam [121,345,333] Bob [198,787] Name Array [start,len] Ph_Number John 0,2 192 Sam 2,3 191 Bob 5,2 121 345 333 198 787 Name Info Strut<age,gender> John [31,M] Sam [45,F] Bob [16,M] Name Info.age Info.gender John 31 M Sam 45 F Bob 16 M

- 16. 16 Encoding & Compression • Efficient encoding scheme supported: • DELTA, RLE, BIT_PACKED • Dictionary: • medium high cardinality: file level dictionary • very low cardinality: table level global dictionary • CUSTOM • Compression Scheme: Snappy •Speedup Aggregation •Reduce run-time memory footprint •Enable deferred decoding •Enable fast distinct count Big Win:

- 17. 17 Outline Use Case & Motivation: Why introducing a new file format? CarbonData File Format Deep Dive Framework Integrated with CarbonData Performance Demo Future Plan

- 18. 18 CarbonData Modules Carbon-format Carbon-core Reader/Writer Thrift definition Carbon-Spark Integration Carbon-Hadoop Input/Output Format Language Agnostic Format Specification Core component of format implementation for reading/writing Carbon data Provide Hadoop Input/Output Format interface Integration of Carbon with Spark including query optimization

- 19. 19 Spark Integration • Query CarbonData Table • DataFrame API • Spark SQL Statement • Support schema evolution of Carbon table via ALTER TABLE • Add, Delete or Rename Column • schema update only, data stored on disk is untouched CREATE TABLE [IF NOT EXISTS] [db_name.]table_name [(col_name data_type [COMMENT col_comment], ...)] [COMMENT table_comment] [PARTITIONED BY (col_name data_type [COMMENT col_comment], ...)] STORED BY ‘org.carbondata.hive.CarbonHanlder’ [TBLPROPERTIES (property_name=property_value, ...)] [AS select_statement];

- 20. 20 Blocklet Spark Integration Table Block Footer + Index Blocklet Blocklet … … C1 C2 C3 C4 C5 C6 C7 C9 Table Level MDK Tree Index Inverted Index • Query optimization • Vectorized record reading • Predicate push down by leveraging multi-level index • Column Pruning • Defer decoding for aggregation Block Blocklet Blocklet Footer + Index Block Footer + Index Blocklet Blocklet Block Blocklet Blocklet Footer + Index

- 21. 21 Data Ingestion • Bulk Data Ingestion • CSV file conversion • MDK clustering level: load level vs. node level • Save Spark dataframe as Carbon data file df.write .format("org.apache.spark.CarbonSource") .options(Map("dbName" -> "db1", "tableName" -> "tbl1")) .mode(SaveMode.Overwrite) .save(“/path”) LOAD DATA [LOCAL] INPATH 'folder path' [OVERWRITE] INTO TABLE tablename OPTIONS(property_name=property_value, ...) INSERT INTO TABLE tablennme AS select_statement1 FROM table1;

- 22. 22 Data Compaction • Data compaction is used to merge small files • Re-clustering across loads • Two types of compactions - Minor compaction • Compact adjacent files into a single big file (~HDFS block size) - Major compaction • Reorganize adjacent loads to achieve better clustering along MDK index

- 23. 23 Outline Use Case & Motivation: Why introducing a new file format? CarbonData File Format Deep Dive Framework Integrated with CarbonData Performance Demo Future Plan

- 24. 24 26.28 12.71 9.82 10.38 11.21 23.05 17.33 15.49 17.82 24.64 107.39 101.62 111.86 9.45 4.41 1.62 2.54 8.16 0.89 0.55 0.52 0.54 1.19 0.16 2.24 4.28 0.00 20.00 40.00 60.00 80.00 100.00 120.00 SQL1 SQL2 SQL3 SQL4 SQL5 SQL6 SQL7 SQL8 SQL9 SQL10 SQL11 SQL12 SQL13 ResponseTime(Seconds) Benchmark Queries Carbon vs Popular Columnar Stores Popular Columnar Stores Carbon Performance comparison High Throughput/Full Scan Query OLAP/Interactive Query Random Access Query Data Size : 2TB 1.4x to 6x faster 20x – 33x faster 26x – 688x faster

- 25. 25 Performance comparison - Observations High Throughput/Full Scan Query 1.4 to 6 times faster Deferred decoding enables faster aggregation on the fly. OLAP/Interactive Query 20 to 33 times faster MDK, Min-Max and Inverted indices enable block pruning Deferred decoding enables faster aggregation on the fly. Random Access Query 26 to 688 times faster Inverted index enables faster row reconstruction. Column group eliminates implicit joins for row reconstruction.

- 26. 26 Outline Motivation: Why introducing a new file format? CarbonData File Format Deep Dive Framework Integrated with CarbonData Performance Demo Future Plan

- 27. 27 Live Demo Demo Environment Number of Nodes 5 VM (AWS r3.4xlarge) vCPU 80 (16/node) Memory 500 GiB (100 GiB/node) #Columns 300 Data Size 600GB #Records 300M High Throughput/Full Scan Query SELECT PROD_BRAND_NAME, SUM(STR_ORD_QTY) FROM oscon_demo GROUP BY PROD_BRAND_NAME; OLAP/Interactive query SELECT PROD_COLOR, SUM(STR_ORD_QTY) FROM oscon_demo WHERE CUST_COUNTRY ='New Zealand' AND CUST_CITY = 'Auckland' AND PRODUCT_NAME = 'Huawei Honor 4X' GROUP BY PROD_COLOR; Random Access Query SELECT * FROM oscon_demo WHERE CUST_PRFRD_FLG= "Y" AND PROD_BRAND_NAME = "Huawei" AND PROD_COLOR = "BLACK" AND CUST_LAST_RVW_DATE = "2015-12-11 00:00:00" AND CUST_COUNTRY ='New Zealand' AND CUST_CITY = 'Auckland' AND PRODUCT_NAME = 'Huawei Honor 4X' ;

- 28. 28 Outline Motivation: Why introducing a new file format? CarbonData File Format Deep Dive Framework Integrated with CarbonData Performance Demo Future Plan

- 29. 29 Future Plan • Upgrade to Spark 2.0 • Add append support • Support pre-aggregated table • Enable offline IUD support • Broader Integration across Hadoop-ecosystem

- 30. 30 Community • CarbonData is open sourced & will become Apache Incubator project • Welcome contribution to our Github @: https://github.com/HuaweiBigData/carbondata • Main Contributors: • Jihong MA, Vimal, Raghu, Ramana, Ravindra, Vishal, Aniket, Liang Chenliang, Jacky Likun, Jarry Qiuheng, David Caiqiang, Eason Linyixin, Ashok, Sujith, Manish, Manohar, Shahid, Ravikiran, Naresh, Krishna, Babu, Ayush, Santosh, Zhangshunyu, Liujunjie, Zhujing (Huawei) • Jean-Baptiste Onofre (Talend, ASF member), Henry Saputra (eBay, ASF member), Uma Maheswara Rao G(Intel, Hadoop PMC)

- 31. Thank you www.huawei.com Copyright©2014 Huawei Technologies Co., Ltd. All Rights Reserved. The information in this document may contain predictive statements including, without limitation, statements regarding the future financial and operating results, future product portfolio, new technology, etc. There are a number of factors that could cause actual results and developments to differ materially from those expressed or implied in the predictive statements. Therefore, such information is provided for reference purpose only and constitutes neither an offer nor an acceptance. Huawei may change the information at any time without notice.

![11

Years Quarters Months Territory Country Quantity Sales

2003 QTR1 Jan EMEA Germany 142 11,432

2003 QTR1 Jan APAC China 541 54,702

2003 QTR1 Jan EMEA Spain 443 44,622

2003 QTR1 Feb EMEA Denmark 545 58,871

2003 QTR1 Feb EMEA Italy 675 56,181

2003 QTR1 Mar APAC India 52 9,749

2003 QTR1 Mar EMEA UK 570 51,018

2003 QTR1 Mar Japan Japan 561 55,245

2003 QTR2 Apr APAC Australia 525 50,398

2003 QTR2 Apr EMEA Germany 144 11,532

[1,1,1,1,1] : [142,11432]

[1,1,1,3,2] : [541,54702]

[1,1,1,1,3] : [443,44622]

[1,1,2,1,4] : [545,58871]

[1,1,2,1,5] : [675,56181]

[1,1,3,3,6] : [52,9749]

[1,1,3,1,7] : [570,51018]

[1,1,3,2,8] : [561,55245]

[1,2,4,3,9] : [525,50398]

[1,2,4,1,1] : [144,11532]

Blocklet

• Data are sorted along MDK (multi-dimensional keys)

• data stored as index in columnar format

Encoding

Blocklet Logical View

Sort

(MDK Index)

[1,1,1,1,1] : [142,11432]

[1,1,1,1,3] : [443,44622]

[1,1,1,3,2] : [541,54702]

[1,1,2,1,4] : [545,58871]

[1,1,2,1,5] : [675,56181]

[1,1,3,1,7] : [570,51018]

[1,1,3,2,8] : [561,55245]

[1,1,3,3,6] : [52,9749]

[1,2,4,1,1] : [144,11532]

[1,2,4,3,9] : [525,50398]

Sorted MDK Index

1

1

1

1

1

1

1

1

1

1

1

1

1

1

1

1

1

1

2

2

1

1

1

2

2

3

3

3

4

4

1

1

3

1

1

1

2

3

1

3

142

443

541

545

675

570

561

52

144

525

11432

44622

54702

58871

56181

51018

55245

9749

11532

50398

C1 C2 C3 C4 C5 C6 C7

1

3

2

4

5

7

8

6

1

9](https://image.slidesharecdn.com/oscon-carbondata-may18-1-160901094446/85/Introducing-Apache-Carbon-Data-Hadoop-Native-Columnar-Data-Format-11-320.jpg)

![13

Blocklet Rows

[1|1] :[1|1] :[1|1] :[1|1] :[1|1] : [142]:[11432]

[1|2] :[1|2] :[1|2] :[1|2] :[1|9] : [443]:[44622]

[1|3] :[1|3] :[1|3] :[1|4] :[2|3] : [541]:[54702]

[1|4] :[1|4] :[2|4] :[1|5] :[3|2] : [545]:[58871]

[1|5] :[1|5] :[2|5] :[1|6] :[4|4] : [675]:[56181]

[1|6] :[1|6] :[3|6] :[1|9] :[5|5] : [570]:[51018]

[1|7] :[1|7] :[3|7] :[2|7] :[6|8] : [561]:[55245]

[1|8] :[1|8] :[3|8] :[3|3] :[7|6] : [52]:[9749]

[1|9] :[2|9] :[4|9] :[3|8] :[8|7] : [144]:[11532]

[1|10]:[2|10]:[4|10]:[3|10] :[9|10] : [525]:[50398]

Blocklet

( sort column within column chunk)

Run Length Encoding & Compression

Dim1 Block

1(1-10)

Dim2 Block

1(1-8)

2(9-10)

Dim3 Block

1(1-3)

2(4-5)

3(6-8)

4(9-10)

Dim4 Block

1(1-2,4-6,9)

2(7)

3(3,8,10)

Measure1

Block

Measure2

Block

Dim5 Block

1(1,9)

2(3)

3(2)

4(4)

5(5)

6(8)

7(6)

8(7)

9(10)

Columnar Store

Column chunk Level

inverted Index

[142]:[11432]

[443]:[44622]

[541]:[54702]

[545]:[58871]

[675]:[56181]

[570]:[51018]

[561]:[55245]

[52]:[9749]

[144]:[11532]

[525]:[50398]

Column Chunk Inverted Index

• Optionally store column data as inverted index

within column chunk

• suitable to low cardinality column

• better compression & fast predicate filtering

Blocklet Physical View

1

10

142

443

541

545

675

570

561

52

144

525

11432

44622

54702

58871

56181

51018

55245

9749

11532

50398

C1

d r d r d r d r d r d r

1

10

1

8

2

2

1

10

1

3

2

2

3

3

4

2

1

10

1

6

2

1

3

3

1

2

4

3

9

1

7

1

3

1

…

1

2

2

1

3

1

4

1

5

1

…

1

1

9

1

3

1

2

1

4

1

…

C2 C3 C4 C5 C6 C7](https://image.slidesharecdn.com/oscon-carbondata-may18-1-160901094446/85/Introducing-Apache-Carbon-Data-Hadoop-Native-Columnar-Data-Format-13-320.jpg)

![15

Nested Data Type Representation

• Represented as a composite of two columns

• One column for the element value

• One column for start_index & length of Array

Arrays

• Represented as a composite of finite number

of columns

• Each struct element is a separate column

Struts

Name Array<Ph_Number>

John [192,191]

Sam [121,345,333]

Bob [198,787]

Name Array

[start,len]

Ph_Number

John 0,2 192

Sam 2,3 191

Bob 5,2 121

345

333

198

787

Name Info Strut<age,gender>

John [31,M]

Sam [45,F]

Bob [16,M]

Name Info.age Info.gender

John 31 M

Sam 45 F

Bob 16 M](https://image.slidesharecdn.com/oscon-carbondata-may18-1-160901094446/85/Introducing-Apache-Carbon-Data-Hadoop-Native-Columnar-Data-Format-15-320.jpg)

![19

Spark Integration

• Query CarbonData Table

• DataFrame API

• Spark SQL Statement

• Support schema evolution of Carbon table via ALTER TABLE

• Add, Delete or Rename Column

• schema update only, data stored on disk is untouched

CREATE TABLE [IF NOT EXISTS] [db_name.]table_name [(col_name

data_type [COMMENT col_comment], ...)] [COMMENT table_comment]

[PARTITIONED BY (col_name data_type [COMMENT col_comment],

...)] STORED BY ‘org.carbondata.hive.CarbonHanlder’

[TBLPROPERTIES (property_name=property_value, ...)] [AS

select_statement];](https://image.slidesharecdn.com/oscon-carbondata-may18-1-160901094446/85/Introducing-Apache-Carbon-Data-Hadoop-Native-Columnar-Data-Format-19-320.jpg)

![21

Data Ingestion

• Bulk Data Ingestion

• CSV file conversion

• MDK clustering level: load level vs. node level

• Save Spark dataframe as Carbon data file

df.write

.format("org.apache.spark.CarbonSource")

.options(Map("dbName" -> "db1", "tableName" ->

"tbl1"))

.mode(SaveMode.Overwrite)

.save(“/path”)

LOAD DATA [LOCAL] INPATH 'folder path' [OVERWRITE]

INTO TABLE tablename

OPTIONS(property_name=property_value, ...)

INSERT INTO TABLE tablennme AS select_statement1

FROM table1;](https://image.slidesharecdn.com/oscon-carbondata-may18-1-160901094446/85/Introducing-Apache-Carbon-Data-Hadoop-Native-Columnar-Data-Format-21-320.jpg)