NovaProva, a new generation unit test framework for C programs

- 1. novaprova a new generation unit test framework for C programs www.novaprova.org Greg Banks <[email protected]>

- 2. Overview ● Why Did I Write NovaProva? ● Design Philosophy & Features ● Tutorial + How It Works ● Remaining Work ● End Matter

- 3. Why Did I Write NovaProva? ● scratching my own itch! ● my day job is the Cyrus IMAP server – which is written in C (K&R in some places) – and is large – and old – and complex – and had ZERO buildable tests when I started

- 5. Testing Cyrus ● I added system tests using Perl – http://git.cyrusimap.org/cassandane/ ● I added unit tests using the CUnit library – because it's commonly available – supports C (no C++) – the first few tests were easy – but they got harder and harder

- 6. Is Cyrus Tested Enough? ● code-to-test ratio metric – easily measurable; just enough meaning ● "Code-to-test ratios above 1:2 is a smell, above 1:3 is a stink." – David H. Hansson, creator of Ruby On Rails ● 1:0 is too little – no tests at all ● sweet spot 1:1 to 1:2 – from the zeitgeist on stackexchange.com

- 7. Some Example C projects (chart; axis annotations: "worse", "sweet spot", "better")

- 8. From Which We Can Conclude ● the SQLite folks need a hobby ● lots of C software is “under” tested ● Cyrus is very under tested ● why!? ● in my experience writing & running more than a few CUnit tests is very hard ● need a better CUnit – NovaProva!

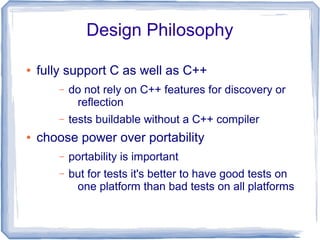

- 9. Design Philosophy ● fully support C as well as C++ – do not rely on C++ features for discovery or reflection – tests buildable without a C++ compiler ● choose power over portability – portability is important – but for tests it's better to have good tests on one platform than bad tests on all platforms

- 10. Design Philosophy (2) ● choose your convenience over my laziness – I put the work into the framework so you don't have to put it into the tests – simplify the tedious parts of testing ● choose correct over fast – slow tests are annoying – but remotely debugging after shipping is much more annoying

- 11. Design Philosophy (3) ● choose best practice as the default – maximise test isolation – maximise failure mode detection ● have the courage to do “impossible” things

- 12. Features ● writing tests is easy ● build integration is easy ● tests are powerful ● test running is reliable ● integrating with other tools is easy

- 13. Writing Tests Is Easy ● test functions are void (void) – returning normally is a success ● use familiar xUnit-like assertion macros – 1st failure terminates the test – like *_FATAL() semantics in CUnit

- 14. Example 01_simple: test /* mytest.c */ #include <np.h> #include "mycode.h" static void test_simple(void) { int r; r = myatoi("42"); NP_ASSERT_EQUAL(r, 42); } (slide callouts: NovaProva header; Code Under Test header; test function; call Code Under Test; assert correct result)

- 15. Writing The Main Is Easy ● no need to register test functions – just name your test function test_foobar() – may be static ● fully automatic test discovery at runtime – uses reflection

- 16. Example 01_simple: main /* testrunner.c */ #include <stdlib.h> #include <np.h> int main(int argc, char **argv) { int ec = 0; np_runner_t *runner = np_init(); ec = np_run_tests(runner, NULL); np_done(runner); exit(ec); } (slide callouts: NovaProva header; initialise the library; run all tests; shut down the library)

- 17. How Does Reflection Work ● reflection: a program detects facts about (other parts of) itself – all modern languages do this ● “C can't do reflection” – there is no standard API – the compiler doesn't write the info into .o files ● yes it does: -g debug information ● but, that's not standard! ● yes it is: DWARF

- 18. How Does Reflection Work (2) ● the case for DWARF – standard – documents at dwarfstd.org – used with ELF on Linux & Solaris – used with Mach objects on MacOS – compilers already generate it ● using -g easier than patching cc ● but, it's only for the debugger! – it's a general purpose format – describes many artifacts of the compiler's output and maps to source

- 19. How Does Reflection Work (3) ● so I created a reflection API ● provides a high-level API ● reads DWARF info – read /proc/self/exe to find pathname – call dl_iterate_phdr() to walk the runtime linker's map of objects – use libbfd to find ELF sections – use mmap() to map the .debug_* sections – lazily decode the mmap'd DWARF info
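
The first and fourth steps above can be sketched with plain POSIX calls. This is a minimal illustration, not NovaProva's code: the function names `own_executable_path` and `is_elf_file` are hypothetical, and the sketch only confirms that the mapped file starts with the ELF magic number. It does not locate or decode any `.debug_*` section.

```c
#include <fcntl.h>
#include <string.h>
#include <sys/mman.h>
#include <sys/stat.h>
#include <unistd.h>

/*
 * Resolve the pathname of the running executable by reading the
 * /proc/self/exe symlink (Linux-specific).
 * Returns the path length on success, or -1 on failure.
 */
long own_executable_path(char *buf, size_t size)
{
    if (size == 0)
        return -1;
    ssize_t n = readlink("/proc/self/exe", buf, size - 1);
    if (n < 0)
        return -1;
    buf[n] = '\0';              /* readlink() does not NUL-terminate */
    return (long)n;
}

/*
 * Map a file read-only and check for the ELF magic number.
 * Returns 1 if the file is ELF, 0 if it is not, -1 on error.
 */
int is_elf_file(const char *path)
{
    int fd = open(path, O_RDONLY);
    if (fd < 0)
        return -1;

    struct stat sb;
    if (fstat(fd, &sb) < 0 || sb.st_size < 4) {
        close(fd);
        return -1;
    }

    void *map = mmap(NULL, (size_t)sb.st_size, PROT_READ, MAP_PRIVATE, fd, 0);
    close(fd);                  /* the mapping stays valid after close() */
    if (map == MAP_FAILED)
        return -1;

    int is_elf = (memcmp(map, "\177ELF", 4) == 0);
    munmap(map, (size_t)sb.st_size);
    return is_elf;
}
```

A caller would pass the result of `own_executable_path()` to `is_elf_file()`; a real reflection layer would keep the mapping open and decode it lazily, as the slide describes.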

- 20. Runtime Test Discovery ● once we have reflection, it's fairly easy ● walk all functions in all compile_units – compare against regexp /^test_(.*)$/ – test name is the 1st submatch – check function has void return & args – testnode name = CU's pathname + test name – make a new testnode, creating ancestors ● prune common nodes from root ● mocks & parameters found the same way
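
The name-matching step can be sketched with the POSIX regex API. The regexp is the one on the slide; the function name `test_name_from_symbol` and its return convention are assumptions made for illustration, and the checks on return type and arguments (which need the DWARF information) are left out.

```c
#include <regex.h>
#include <stddef.h>
#include <string.h>

/*
 * If `symbol` matches ^test_(.*)$, copy the first submatch (the test
 * name) into `name` and return 1. Return 0 if the symbol is not a test
 * function, or -1 on error (bad regexp or buffer too small).
 */
int test_name_from_symbol(const char *symbol, char *name, size_t size)
{
    regex_t re;
    regmatch_t m[2];
    int result = 0;

    if (regcomp(&re, "^test_(.*)$", REG_EXTENDED) != 0)
        return -1;

    if (regexec(&re, symbol, 2, m, 0) == 0) {
        size_t len = (size_t)(m[1].rm_eo - m[1].rm_so);
        if (len < size) {
            memcpy(name, symbol + m[1].rm_so, len);
            name[len] = '\0';
            result = 1;
        } else {
            result = -1;        /* output buffer too small */
        }
    }
    regfree(&re);
    return result;
}
```

With this sketch, `test_simple` yields the test name `simple`, while `mock_foo` is not treated as a test.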

- 21. Build Integration Is Easy ● just a library, no build-time magic – fully C-friendly API – uses C++ internally – but you don't need to compile or link using C++ ● easy to drop into your project – pkg-config .pc file – PKG_CHECK_MODULES in autoconf's configure.ac ● need to build tests with -g – but not the Code Under Test

- 22. Building Example 01_simple # Makefile NOVAPROVA_CFLAGS= $(shell pkg-config --cflags novaprova) NOVAPROVA_LIBS= $(shell pkg-config --libs novaprova) CFLAGS= -g $(NOVAPROVA_CFLAGS) check: testrunner ./testrunner TEST_SOURCE= mytest.c TEST_OBJS= $(TEST_SOURCE:.c=.o) testrunner: testrunner.o $(TEST_OBJS) $(MYCODE_OBJS) $(LINK.c) -o $@ testrunner.o $(TEST_OBJS) $(MYCODE_OBJS) $(NOVAPROVA_LIBS) (slide callouts: pkg-config tells us the right flags; need to build with -g; the check: target builds & runs; very standard link rule for testrunner)

- 23. Running Tests Is Reliable ● runs tests under Valgrind – detects many failure modes of C code – turns them into graceful test failures ● strong test isolation – uses UNIX process model – forks a process per test – if one test fails, keeps running other tests – allows for running in parallel – allows for reliable timeouts
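
The process-per-test idea can be sketched in a few lines of POSIX C. This is an illustration of the general technique, not NovaProva's implementation: `run_isolated`, `enum test_result`, and the two example test functions are hypothetical names, and Valgrind, parallelism, and timeouts are all omitted.

```c
#include <errno.h>
#include <stdlib.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

typedef void (*test_fn_t)(void);

enum test_result { TEST_PASS = 0, TEST_FAIL = 1, TEST_ERROR = 2 };

/*
 * Run one test function in a forked child. A normal return from the
 * test is a pass; any non-zero exit or death by signal is a failure.
 * The parent survives either way, so later tests can still run.
 */
enum test_result run_isolated(test_fn_t fn)
{
    pid_t pid = fork();
    if (pid < 0)
        return TEST_ERROR;
    if (pid == 0) {
        fn();
        _exit(0);               /* returning normally is a success */
    }

    int status;
    while (waitpid(pid, &status, 0) < 0) {
        if (errno != EINTR)
            return TEST_ERROR;
    }
    if (WIFEXITED(status) && WEXITSTATUS(status) == 0)
        return TEST_PASS;
    return TEST_FAIL;
}

/* Two example tests: one passes, one crashes the child process. */
void example_passing_test(void) { }
void example_crashing_test(void) { abort(); }
```

Calling `run_isolated(example_crashing_test)` reports a failure, and a following `run_isolated(example_passing_test)` still passes, which is the isolation property the slide describes.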

- 26. Process Architecture (diagram: the Valgrind'ed testrunner forks Valgrind'ed children 1 … N; each child runs a test; the testrunner waits for children)

- 27. Running Example 01_simple % make check ./testrunner [0.000] np: starting valgrind np: NovaProva Copyright (c) Gregory Banks np: Built for O/S linux architecture x86 np: running np: running: "mytest.simple" PASS mytest.simple np: 1 run 0 failed (slide callouts: check: target builds & runs; automatically starts Valgrind; by default runs all tests serially; post-run summary)

- 28. How NP Valgrinds Itself ● Valgrind should be easy & the default, with no build system changes – Valgrind is a CPU+kernel simulator – you can't just start Valgrind on a running process ● build a Valgrind command line in the library and execv() - easy! – downside: main() is run twice – major downside: Valgrind exec loop

- 29. How NP Valgrinds Itself (2) ● Valgrind has an API you can use – #include <valgrind/valgrind.h> – macros that compile to magical NOP instruction sequences – these do nothing on a real CPU, but Valgrind does special things ● predicate RUNNING_ON_VALGRIND – returns true iff the process is running in Valgrind – so the library checks that

- 30. How NP Valgrinds Itself (3) ● to run Valgrind we need argv[] – original, before any getopt() processing – to pass down to the 2nd main() – for maximum convenience and reliability, shouldn't expect main() to pass the correct argv[] down ● global variable _dl_argv – undocumented, undeclared, defined in the runtime linker – set to main()'s argv[], before main()

- 31. Parallel Running ● default mode is to run tests serially ● optionally can run tests in parallel ● call np_set_concurrency() in main – 0 = use all the online CPUs on the machine
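
One plausible way to interpret the "0 = use all the online CPUs" convention is sketched below. The helper `effective_concurrency` is hypothetical and is not part of the NovaProva API; treating negative values as "serial" is also an assumption. `_SC_NPROCESSORS_ONLN` is not in POSIX but is available on Linux and several other systems.

```c
#include <unistd.h>

/*
 * Translate a requested concurrency into a number of worker processes:
 *   > 0  use exactly that many
 *   0    use one per online CPU
 *   < 0  (assumption) fall back to serial execution
 */
int effective_concurrency(int requested)
{
    if (requested > 0)
        return requested;
    if (requested < 0)
        return 1;

    long n = sysconf(_SC_NPROCESSORS_ONLN);
    return (n > 0) ? (int)n : 1;
}
```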

- 32. Example 02_parallel int main(int argc, char **argv) { … int concurrency = -1; np_runner_t *runner; … while ((c = getopt(argc, argv, "j:")) >= 0) { switch (c) { case 'j': concurrency = atoi(optarg); … runner = np_init(); if (concurrency >= 0) np_set_concurrency(runner, concurrency); ec = np_run_tests(runner, NULL); (slide callout: parse -j option)

- 33. Running Example 02_parallel ./testrunner -j4 … np: running np: running: "mytest.000" np: running: "mytest.001" np: running: "mytest.002" np: running: "mytest.003" Running test 0 Running test 1 Running test 2 Running test 3 Finished test 0 Finished test 1 Finished test 2 Finished test 3 PASS mytest.000 PASS mytest.002 PASS mytest.003 PASS mytest.001 np: 4 run 0 failed (slide callouts: request 4 tests in parallel; runs all tests in parallel; post-run summary)

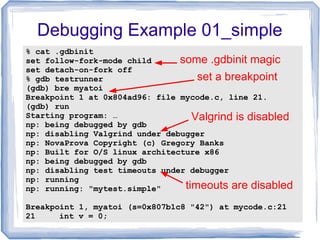

- 34. Using GDB ● NP detects when it's running in gdb – automatically disables test timeout – automatically disables Valgrind ● but you have to set some gdb options, sorry! – always runs the test in a fork()ed child – set follow-fork-mode child – set detach-on-fork off

- 35. Debugging Example 01_simple % cat .gdbinit set follow-fork-mode child set detach-on-fork off % gdb testrunner (gdb) bre myatoi Breakpoint 1 at 0x804ad96: file mycode.c, line 21. (gdb) run Starting program: … np: being debugged by gdb np: disabling Valgrind under debugger np: NovaProva Copyright (c) Gregory Banks np: Built for O/S linux architecture x86 np: being debugged by gdb np: disabling test timeouts under debugger np: running np: running: "mytest.simple" Breakpoint 1, myatoi (s=0x807b1c8 "42") at mycode.c:21 21 int v = 0; (slide callouts: some .gdbinit magic; set a breakpoint; Valgrind is disabled; timeouts are disabled)

- 36. How NP Detects GDB ● sometimes the library needs to know if it's running under GDB – need to disable test timeouts – need to avoid running self under Valgrind because gdb & Valgrind don't play together ● on Linux, gdb uses ptrace() to attach itself to a process – a process knows if it's being traced – /proc/$pid/status shows "TracerPid:"

- 37. How NP Detects GDB (2) ● read /proc/self/status to see if we're ptraced – downside: other utilities like strace also use ptrace() ● we also use /proc/$pid/cmdline to discover the tracer's argv[0] – and compare this to a whitelist of debugger executables – yes there is /proc/$pid/exe symlink, but it's not always readable
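
The two /proc lookups described above can be sketched as follows. All function names are hypothetical, and the whitelist contains only "gdb" because the talk does not list its actual contents. Parsing is kept separate from file reading so that it can be exercised on a plain string.

```c
#include <stdio.h>
#include <stdlib.h>
#include <string.h>

/*
 * Extract the TracerPid value from the text of /proc/<pid>/status.
 * Returns the tracer's pid, 0 if the process is not being traced,
 * or -1 if no TracerPid line is present.
 */
long parse_tracer_pid(const char *status_text)
{
    static const char key[] = "TracerPid:";
    const char *line = status_text;

    while (line != NULL && *line != '\0') {
        if (strncmp(line, key, sizeof(key) - 1) == 0)
            return strtol(line + sizeof(key) - 1, NULL, 10);
        line = strchr(line, '\n');
        if (line != NULL)
            line++;             /* step past the newline */
    }
    return -1;
}

/* Read /proc/self/status (Linux-specific) and return our tracer's pid. */
long current_tracer_pid(void)
{
    char buf[4096];
    FILE *fp = fopen("/proc/self/status", "r");
    if (fp == NULL)
        return -1;
    size_t n = fread(buf, 1, sizeof(buf) - 1, fp);
    fclose(fp);
    buf[n] = '\0';
    return parse_tracer_pid(buf);
}

/*
 * Read argv[0] of another process from /proc/<pid>/cmdline. The file
 * holds NUL-separated arguments, so the buffer read from it already
 * ends argv[0] at the first NUL. Returns 0 on success, -1 on failure.
 */
int read_argv0(long pid, char *buf, size_t size)
{
    char path[64];
    if (size == 0)
        return -1;
    snprintf(path, sizeof(path), "/proc/%ld/cmdline", pid);
    FILE *fp = fopen(path, "r");
    if (fp == NULL)
        return -1;
    size_t n = fread(buf, 1, size - 1, fp);
    fclose(fp);
    buf[n] = '\0';
    return (n > 0) ? 0 : -1;
}

/* Compare the basename of argv[0] against a whitelist of debuggers. */
int is_known_debugger(const char *argv0)
{
    /* Assumption: the real whitelist is not given in the talk. */
    static const char *const whitelist[] = { "gdb", NULL };
    const char *base = strrchr(argv0, '/');

    base = (base != NULL) ? base + 1 : argv0;
    for (size_t i = 0; whitelist[i] != NULL; i++) {
        if (strcmp(base, whitelist[i]) == 0)
            return 1;
    }
    return 0;
}
```

Checking the tracer's name is what separates a debugger from other ptrace() users such as strace: both produce a non-zero TracerPid, but only one is on the whitelist.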

- 38. Using Jenkins CI ● Jenkins reads, tracks & displays test results – in jUnit XML format ● http://ci.cyrusimap.org/

- 39. Using Jenkins CI (2) ● NP optionally emits jUnit XML report ● call np_set_output_format() in main – an XML file is written for each suite – to reports/TEST-$suite.xml

- 40. Example 03_jenkins int main(int argc, char **argv) { int ec = 0; const char *output_format = 0; np_runner_t *runner; … while ((c = getopt(argc, argv, "f:")) >= 0) { switch (c) { case 'f': output_format = optarg; break; … runner = np_init(); if (output_format) np_set_output_format(runner, output_format); (slide callouts: parse the -f option; name of the output format)

- 41. Running Example 03_jenkins % mkdir reports % ./testrunner -f junit [0.000] np: starting valgrind np: NovaProva Copyright (c) Gregory Banks np: Built for O/S linux architecture x86 % ls reports/ TEST-mytest.xml % xmllint --format reports/TEST-mytest.xml <?xml version="1.0" encoding="UTF-8"?> <testsuite name="mytest" failures="0" tests="1" hostname="…" timestamp="2013-01-28T12:15:39" errors="0" time="0.882"> <properties/> <testcase name="simple" classname="simple" time="0.882"/> <system-out/> <system-err/> </testsuite> (slide callouts: use the -f option; no post-run summary, instead a new XML file; this doesn't work yet)

- 42. Fixtures & N/A ● supports a Not Applicable test result – so a test can decide that preconditions aren't met – counted separately from passes and failures ● supports fixtures – set_up function is called before every test – tear_down function is called after every test – discovered at runtime using reflection – will be fixed in 1.2, sorry

- 43. Example 04_fixtures static int set_up(void) { fprintf(stderr, "Setting up fixtures\n"); return 0; } static int tear_down(void) { fprintf(stderr, "Tearing down fixtures\n"); return 0; } (slide callout: just name your functions set_up and tear_down)

- 44. Running Example 04_fixtures np: running np: running: "mytest.one" Setting up fixtures Running test_one Finished test_one Tearing down fixtures PASS mytest.one np: running: "mytest.two" Setting up fixtures Running test_two Finished test_two Tearing down fixtures PASS mytest.two np: 2 run 0 failed (slide callouts: set_up runs before each test; tear_down runs after each test)

- 45. Tests Are A Tree ● xUnit implementations have a 2-level organisation – test – the smallest runnable element – suite – group of tests ● software just isn't that simple anymore ● NP uses an arbitrary depth tree of testnodes – single root testnode – each testnode has a full pathname – separated by '.'

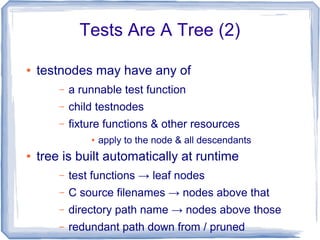

- 46. Tests Are A Tree (2) ● testnodes may have any of – a runnable test function – child testnodes – fixture functions & other resources ● apply to the node & all descendants ● tree is built automatically at runtime – test functions → leaf nodes – C source filenames → nodes above that – directory path name → nodes above those – redundant path down from / pruned

- 47. Tests Are A Tree (3) ● tests are named in a way which naturally reflects your source code organisation ● unique test names, no effort ● flexible scoping/containment mechanism

- 48. Example 05_tree /* startrek/tng/federation/enterprise.c */ static void test_torpedoes(void) { fprintf(stderr, "Testing photon torpedoes\n"); } /* startrek/tng/klingons/neghvar.c */ static void test_disruptors(void) { fprintf(stderr, "Testing disruptors\n"); } /* starwars/episode4/rebels/xwing.c */ static void test_lasers(void) { fprintf(stderr, "Testing laser cannon\n"); }

- 49. Running Example 05_tree np: running np: running: "05_tree.startrek.tng.federation.enterprise.torpedoes" Testing photon torpedoes PASS 05_tree.startrek.tng.federation.enterprise.torpedoes np: running: "05_tree.startrek.tng.klingons.neghvar.disruptors" Testing disruptors PASS 05_tree.startrek.tng.klingons.neghvar.disruptors np: running: "05_tree.starwars.episode4.rebels.xwing.lasers" Testing laser cannon PASS 05_tree.starwars.episode4.rebels.xwing.lasers np: 3 run 0 failed (slide callouts, labelling the parts of a test name: directory path name; .c filename; function)
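
The mapping from a source pathname and a function name to a dotted test name can be sketched like this. `make_testnode_name` is a hypothetical helper; it takes a relative path and does not model the pruning of common ancestors that produces the leading `05_tree` component in the output above.

```c
#include <stdio.h>
#include <string.h>

/*
 * Build a dotted testnode name from a relative source path and a test
 * function name, e.g. "a/b/file.c" + "test_foo" -> "a.b.file.foo".
 * Returns 0 on success, -1 if `function` lacks the test_ prefix or the
 * output buffer is too small.
 */
int make_testnode_name(const char *source_path, const char *function,
                       char *out, size_t size)
{
    static const char prefix[] = "test_";
    size_t plen = strlen(source_path);
    const char *dot = strrchr(source_path, '.');
    const char *slash = strrchr(source_path, '/');

    /* Drop a filename extension such as ".c", but not a dot that
     * belongs to a directory name. */
    if (dot != NULL && (slash == NULL || dot > slash))
        plen = (size_t)(dot - source_path);

    if (strncmp(function, prefix, sizeof(prefix) - 1) != 0)
        return -1;                      /* not a test function */
    function += sizeof(prefix) - 1;

    int n = snprintf(out, size, "%.*s.%s", (int)plen, source_path, function);
    if (n < 0 || (size_t)n >= size)
        return -1;                      /* would have been truncated */

    for (size_t i = 0; i < plen; i++) { /* path separators become '.' */
        if (out[i] == '/')
            out[i] = '.';
    }
    return 0;
}
```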

- 50. Function Mocking ● mocking a function = arrange for Code under Test to call a special test function instead of some normal part of CuT ● dynamic: inserted at runtime not link time – uses a breakpoint-like mechanism – when running under gdb you need to issue the gdb command "handle SIGSEGV nostop noprint" ● mocks may be set up declaratively – name a function mock_foo() & it mocks foo()

- 51. Example 06_mock: CuT int myatoi(const char *s) { int v = 0; for ( ; *s ; s++) { v *= 10; v += mychar2int(*s); } return v; } int mychar2int(int c) { return (c - '0'); } (slide callout: this function, myatoi, calls this one, mychar2int)

- 52. Example 06_mock: Test static void test_simple(void) { int r; r = myatoi("42"); NP_ASSERT_EQUAL(r, 42); } int mock_mychar2int(int c) { fprintf(stderr, "Mocked mychar2int('%c') called\n", c); return (c - '0'); } (slide callouts: test calls myatoi which calls mychar2int; this mocks mychar2int)

- 53. Running Example 06_mock ./testrunner [0.000] np: starting valgrind np: NovaProva Copyright (c) Gregory Banks np: Built for O/S linux architecture x86 np: running np: running: "mytest.simple" Mocked mychar2int('4') called Mocked mychar2int('2') called PASS mytest.simple np: 1 run 0 failed (slide callout: the mock is called instead)

- 54. More On Function Mocking ● mocks may be added at runtime – by name or by function pointer – from tests or fixtures – so you can mock for only part of a test ● mocks may be attached to any testnode – automatically installed before any test below that node starts – automatically removed afterward

- 55. Intercepts = Mocks++ ● more general than function mocks ● OO interface – only available from C++ ● before() virtual function – called before the real function – may change parameters – may redirect to another function (mocks!) ● after() virtual function – called after real function returns – may change return value

- 56. How Intercepts Work ● a 1-process adaption of how GDB does breakpoints ● less general – only works on function entry – doesn't handle recursive functions – not very good with threads either

- 57. How Intercepts Work (2) ● intercepts have an install step ● track and refcount installed intercepts by address ● on first install... (diagram: the process's .text, mapped r-x, containing the target function)

- 58. Installing An Intercept ● mprotect() → PROT_WRITE ● track and refcount pages ● install a SIGSEGV handler ● overwrite the 1st byte of the function ● 0xf4 = HLT (diagram: the .text page holding the target function changes from r-x to rwx)

- 59. Calling An Intercepted Function ● the function is called ● CPU traps on HLT ● kernel delivers SIGSEGV ● signal handler is not a normal function! – extra stuff on the stack – signals blocked – return → magic (diagram: the process's .text, with the rwx target page and the r-x signal handler)

- 60. Signal Handler ● signal handlers get a ucontext_t struct – containing the machine register state – modifiable by the handler! ● check insn at EIP is HLT – SIGSEGV can also come from bugs! ● stash a copy of the ucontext_t ● set EIP in the ucontext_t to a trampoline ● return from signal handler ● libc+kernel “resumes” to our trampoline

- 61. Intercept Trampoline ● now out of signal handler context – stack has the args for the target function – but running the trampoline ● set up a fake stack frame ● call the intercept's before() ● call the target function with fake frame – or another function entirely ● call the intercept's after() ● clean up & return to target's caller

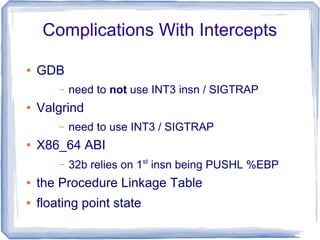

- 62. Complications With Intercepts ● GDB – need to not use INT3 insn / SIGTRAP ● Valgrind – need to use INT3 / SIGTRAP ● X86_64 ABI – 32b relies on 1st insn being PUSHL %EBP ● the Procedure Linkage Table ● floating point state

- 63. Parameterised Tests ● when you want to run the same test several times with a different parameter ● macro NP_PARAMETER – declares a static string variable – and all its possible values ● attached to any node in the test tree ● discovered at runtime using reflection ● tests under that node are run once for each parameter value combination

- 64. Example 07_parameter NP_PARAMETER(pastry, "donut,bearclaw,danish"); static void test_munchies(void) { fprintf(stderr, "I'd love a %s\n", pastry); } (slide callouts: defines a static variable and all its values; just use the variable as a char*)

- 65. Example 07_parameter np: running np: running: "mytest.munchies[pastry=donut]" I'd love a donut PASS mytest.munchies[pastry=donut] np: running: "mytest.munchies[pastry=bearclaw]" I'd love a bearclaw PASS mytest.munchies[pastry=bearclaw] np: running: "mytest.munchies[pastry=danish]" I'd love a danish PASS mytest.munchies[pastry=danish] np: 3 run 0 failed

- 66. Example 13_twoparams NP_PARAMETER(pastry, "donut,bearclaw,danish"); NP_PARAMETER(beverage, "tea,coffee"); static void test_munchies(void) { fprintf(stderr, "I'd love a %s with my %s\n", pastry, beverage); }

- 67. Example 13_twoparams np: running: "mytest.munchies[pastry=donut][beverage=tea]" I'd love a donut with my tea PASS mytest.munchies[pastry=donut][beverage=tea] np: running: "mytest.munchies[pastry=bearclaw][beverage=tea]" I'd love a bearclaw with my tea PASS mytest.munchies[pastry=bearclaw][beverage=tea] np: running: "mytest.munchies[pastry=danish][beverage=tea]" I'd love a danish with my tea PASS mytest.munchies[pastry=danish][beverage=tea] np: running: "mytest.munchies[pastry=donut][beverage=coffee]" I'd love a donut with my coffee PASS mytest.munchies[pastry=donut][beverage=coffee] np: running: "mytest.munchies[pastry=bearclaw][beverage=coffee]" I'd love a bearclaw with my coffee PASS mytest.munchies[pastry=bearclaw][beverage=coffee] np: running: "mytest.munchies[pastry=danish][beverage=coffee]" I'd love a danish with my coffee PASS mytest.munchies[pastry=danish][beverage=coffee] np: 6 run 0 failed
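
The run order above (pastry varies fastest, beverage slowest) is a Cartesian product over the two comma-separated value lists. A minimal sketch of that enumeration follows; the helper names are hypothetical and the code handles exactly two parameters, whereas the framework presumably handles any number.

```c
#include <stdio.h>
#include <string.h>

/* Count the values in a comma-separated list ("" has none). */
size_t count_values(const char *list)
{
    if (*list == '\0')
        return 0;
    size_t n = 1;
    for (const char *p = list; *p != '\0'; p++) {
        if (*p == ',')
            n++;
    }
    return n;
}

/* Copy the n'th (0-based) value of the list into out. 0 on success. */
int nth_value(const char *list, size_t n, char *out, size_t size)
{
    const char *start = list;
    while (n > 0) {
        start = strchr(start, ',');
        if (start == NULL)
            return -1;          /* fewer than n + 1 values */
        start++;
        n--;
    }
    size_t len = strcspn(start, ",");
    if (len >= size)
        return -1;
    memcpy(out, start, len);
    out[len] = '\0';
    return 0;
}

/*
 * Format the name suffix for combination number `index`, where the
 * first parameter varies fastest, e.g. "[pastry=donut][beverage=tea]".
 */
int combination_suffix(const char *name_a, const char *values_a,
                       const char *name_b, const char *values_b,
                       size_t index, char *out, size_t size)
{
    size_t na = count_values(values_a);
    size_t nb = count_values(values_b);
    char a[64], b[64];

    if (na == 0 || nb == 0 || index >= na * nb)
        return -1;
    if (nth_value(values_a, index % na, a, sizeof(a)) != 0 ||
        nth_value(values_b, index / na, b, sizeof(b)) != 0)
        return -1;

    int n = snprintf(out, size, "[%s=%s][%s=%s]", name_a, a, name_b, b);
    return (n >= 0 && (size_t)n < size) ? 0 : -1;
}
```

Three pastries and two beverages give indices 0 to 5, which matches the six runs in the example output.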

- 68. Memory Access Violations ● e.g. following a null pointer ● Valgrind notices first and emits a useful analysis – stack trace – line numbers – fault address – info about where the fault address points ● child process dies with SIGSEGV or SIGBUS ● test runner process reaps it & fails the test

- 69. Example 08_segv np: running: "mytest.segv" About to follow a NULL pointer ==32587== Invalid write of size 1 ==32587== at 0x804AD40: test_segv (mytest.c:22) … ==32587== by 0x804DEF6: np_run_tests (runner.cxx:665) ==32587== by 0x804ACF6: main (testrunner.c:31) ==32587== Address 0x0 is not stack'd, malloc'd or (recently) free'd ==32587== Process terminating with default action of signal 11 (SIGSEGV) ==32587== Access not within mapped region at address 0x0 EVENT SIGNAL child process 32587 died on signal 11 FAIL mytest.crash_and_burn np: 1 run 1 failed (slide callouts: Valgrind report; test fails gracefully)

- 70. Other Memory Issues ● buffer overruns, use of uninitialised variables – discovered by Valgrind, as they happen – which emits its usual helpful analysis ● memory leaks – discovered by Valgrind, explicit leak check after each test completes ● test process queries Valgrind – unsuppressed errors → the test has failed

- 71. Example 09_memleak np: running: "mytest.memleak" About to do leak 32 bytes from malloc() Test ends ==779== 32 bytes in 1 blocks are definitely lost in loss record 9 of 54 ==779== at 0x4026FDE: malloc … ==779== by 0x804AD46: test_memleak (mytest.c:23) … ==779== by 0x804DEFA: np_run_tests (runner.cxx:665) ==779== by 0x804ACF6: main (testrunner.c:31) EVENT VALGRIND 32 bytes of memory leaked FAIL mytest.memleak np: 1 run 1 failed (slide callouts: Valgrind report; test fails)

- 72. Code Under Test Calls exit() ● NP library defines an exit() – yuck, should use dynamic function intercept ● calling exit() during a test – prints a stack trace – fails the test ● calling exit() outside a test – calls _exit()

- 73. Example 10_exit np: running: "mytest.exit" About to call exit(37) EVENT EXIT exit(37) at 0x8056522: np::spiegel::describe_stacktrace by 0x804BD9C: np::event_t::with_stack by 0x804B0CE: exit by 0x804AD42: test_exit by 0x80561F0: np::spiegel::function_t::invoke by 0x804C3A5: np::runner_t::run_function by 0x804D28A: np::runner_t::run_test_code by 0x804D4F7: np::runner_t::begin_job by 0x804DD9A: np::runner_t::run_tests by 0x804DEF2: np_run_tests by 0x804ACF2: main FAIL mytest.exit np: 1 run 1 failed (slide callouts: stacktrace from NovaProva; test fails gracefully)

- 74. Code Under Test Emits Syslogs ● dynamic function intercept on syslog() – installed before each test – uninstalled after each test ● test may register regexps for expected syslogs ● unexpected syslogs fail the test
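
The matching rule (a message is acceptable only if some registered regexp matches it) can be sketched with the POSIX regex API. `syslog_is_expected` is a hypothetical helper, not the NovaProva API; it recompiles each pattern on every call for brevity, where a real implementation would more likely compile once at registration time.

```c
#include <regex.h>
#include <stddef.h>

/*
 * Return 1 if `message` matches any of the registered patterns
 * (POSIX extended regexps, unanchored), otherwise 0. A message that
 * matches nothing is "unexpected" and would fail the test.
 */
int syslog_is_expected(const char *const *patterns, size_t npatterns,
                       const char *message)
{
    for (size_t i = 0; i < npatterns; i++) {
        regex_t re;
        if (regcomp(&re, patterns[i], REG_EXTENDED | REG_NOSUB) != 0)
            continue;           /* skip patterns that fail to compile */
        int rc = regexec(&re, message, 0, NULL, 0);
        regfree(&re);
        if (rc == 0)
            return 1;
    }
    return 0;
}
```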

- 75. Running Example 11_syslog np: running: "mytest.unexpected" EVENT SLMATCH err: This message shouldn't happen at 0x8059B22: np::spiegel::describe_stacktrace by 0x804DD9C: np::event_t::with_stack by 0x804B9FC: np::mock_syslog by 0x807A519: np::spiegel::platform::intercept_tramp by 0x804B009: test_unexpected by 0x80597F0: np::spiegel::function_t::invoke by 0x804FB99: np::runner_t::run_function by 0x8050A7E: np::runner_t::run_test_code by 0x8050CEB: np::runner_t::begin_job by 0x805158E: np::runner_t::run_tests by 0x80516E6: np_run_tests by 0x804AFC3: main FAIL mytest.unexpected (slide callouts: stack trace; test fails)

- 76. Example 11_syslog (2) static void test_expected(void) { np_syslog_ignore("entirely expected"); syslog(LOG_ERR, "This message was entirely expected"); } (slide callout: have to tell NP that a syslog might happen)

- 77. Running Example 11_syslog (2) np: running: "mytest.expected" EVENT SYSLOG err: This message was entirely expected at 0x8059B22: np::spiegel::describe_stacktrace by 0x804DD9C: np::event_t::with_stack by 0x804BABA: np::mock_syslog by 0x807A519: np::spiegel::platform::intercept_tramp by 0x804B031: test_expected by 0x80597F0: np::spiegel::function_t::invoke by 0x804FB99: np::runner_t::run_function by 0x8050A7E: np::runner_t::run_test_code by 0x8050CEB: np::runner_t::begin_job by 0x805158E: np::runner_t::run_tests by 0x80516E6: np_run_tests by 0x804AFC3: main PASS mytest.expected (slide callouts: stack trace; but test passes)

- 78. Slow & Looping Tests ● per-test timeout – test fails if the timeout fires ● default timeout 30 sec – 3 x when running under Valgrind – disabled when running under gdb ● implemented in the testrunner process – child killed with SIGTERM
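
A parent-side timeout of this kind can be sketched as follows. This shows the general technique only: `run_with_timeout`, the result enum, and the example tests are hypothetical names, the deadline uses the coarse one-second resolution of time(), and a child that ignores SIGTERM would need an escalation to SIGKILL that is not shown.

```c
#include <errno.h>
#include <signal.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <time.h>
#include <unistd.h>

typedef void (*timed_fn_t)(void);

enum timed_result { TIMED_PASS, TIMED_FAIL, TIMED_TIMEOUT, TIMED_ERROR };

/*
 * Run fn in a forked child. The parent polls for the child's exit and
 * sends SIGTERM once timeout_sec seconds have elapsed.
 */
enum timed_result run_with_timeout(timed_fn_t fn, int timeout_sec)
{
    pid_t pid = fork();
    if (pid < 0)
        return TIMED_ERROR;
    if (pid == 0) {
        fn();
        _exit(0);
    }

    const struct timespec tick = { 0, 50 * 1000 * 1000 };   /* 50 ms */
    time_t deadline = time(NULL) + timeout_sec;
    int timed_out = 0;
    int status = 0;

    for (;;) {
        pid_t r = waitpid(pid, &status, WNOHANG);
        if (r == pid)
            break;                      /* child has been reaped */
        if (r < 0 && errno != EINTR)
            return TIMED_ERROR;
        if (!timed_out && time(NULL) >= deadline) {
            kill(pid, SIGTERM);         /* the timeout fires */
            timed_out = 1;
        }
        nanosleep(&tick, NULL);
    }

    if (timed_out && WIFSIGNALED(status) && WTERMSIG(status) == SIGTERM)
        return TIMED_TIMEOUT;
    if (WIFEXITED(status) && WEXITSTATUS(status) == 0)
        return TIMED_PASS;
    return TIMED_FAIL;
}

/* Example tests: one returns at once, one runs far past any deadline. */
void example_quick_test(void) { }
void example_slow_test(void) { sleep(30); }
```

Keeping the timer in the parent means a test stuck in an infinite loop cannot interfere with its own timeout, which is the reason the slide gives for implementing it in the testrunner process.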

- 79. Example 12_timeout np: running: "mytest.slow" Test runs for 100 seconds Have been running for 0 sec Have been running for 10 sec Have been running for 20 sec Have been running for 30 sec Have been running for 40 sec Have been running for 50 sec Have been running for 60 sec Have been running for 70 sec Have been running for 80 sec EVENT TIMEOUT Child process 2294 timed out, killing EVENT SIGNAL child process 2294 died on signal 15 FAIL mytest.slow np: 1 run 1 failed (slide callouts: test times out after 90 sec; runner kills child process; test fails gracefully)

- 80. Choosing A Project Name Is Hard ● CUnit has an obvious name – Unit testing for C – but … dangerous ● it took me 2 months to name NovaProva – .org domain not taken – name didn't sound silly – sounded vaguely appropriate ● NovaProva ~= “new test” in Italian

- 81. Remaining Work ● provide an API or a default main() routine to automatically parse a set of default command line options, e.g. --list, to simplify building a testrunner ● class/hashtag concept for test functions – a syntax for specifying these on the command line – how to attach these to test functions in any kind of natural and reliable way?

- 82. Remaining Work (2) ● failpoints – a way of automatically failing and terminating a test if a given function is called, like exit() ● improve the syntax for specifying tests on the command line to – be more flexible e.g. like CSS or XPATH – allow specifying values for test parameters ● do a better job of running tests in parallel ● .deb and .rpm packages

- 83. Remaining Work (3) ● port to OS X & Solaris. ● an API for time warping ● an API for timezone manipulation ● an API for locale manipulation ● detect file descriptor leaks ● detect environment variable leaks ● discovery of tests written in C++ ● handle C++ exceptions

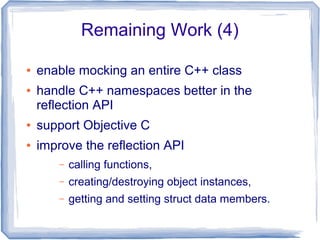

- 84. Remaining Work (4) ● enable mocking an entire C++ class ● handle C++ namespaces better in the reflection API ● support Objective C ● improve the reflection API – calling functions, – creating/destroying object instances, – getting and setting struct data members.

- 85. Thanks ● Opera Software – for letting me open source NovaProva ● Cosimo Streppone – for being Italian ● Andrew Wansink – for putting me onto CUnit ● Previous LCA conferences – for giving me testing religion

- 86. Where To Get It ● www.novaprova.org ● working on binary packages (OBS) ● That's all folks!

Editor's Notes

- #5: Jul 1993: first checkins - zero unit tests, zero system tests ... 8779 commits by ~60 people (11 with > 100 commits, 4 with > 1000) ... Oct 2010: I started working on Cyrus - zero unit tests, zero system tests. Nov 2010: I wrote the first unit test

![Process Architecture: (Valgrind'ed) testrunner, execv(/usr/bin/valgrind, argv[])](https://image.slidesharecdn.com/lca2013-novaprova-final-150327224024-conversion-gate01/85/NovaProva-a-new-generation-unit-test-framework-for-C-programs-25-320.jpg)
