Spring data iii

- 1. M.C. Kang

- 3. Hello World Using Spring for Apache Hadoop

      Declaring a Hadoop job using Spring’s Hadoop namespace:

          <configuration>
            fs.default.name=hdfs://localhost:9000
          </configuration>

          <job id="wordcountJob"
               input-path="/user/gutenberg/input"
               output-path="/user/gutenberg/output"
               mapper="org.apache.hadoop.examples.WordCount.TokenizerMapper"
               reducer="org.apache.hadoop.examples.WordCount.IntSumReducer"/>

          <job-runner id="runner" job="wordcountJob" run-at-startup="true"/>

      This configuration will create a singleton instance of an org.apache.hadoop.mapreduce.Job managed by the Spring container. Spring can determine that outputKeyClass is of the type org.apache.hadoop.io.Text and that outputValueClass is of type org.apache.hadoop.io.IntWritable, so we do not need to set these properties explicitly.

          public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {
              private final static IntWritable one = new IntWritable(1);
              private Text word = new Text();

              public void map(Object key, Text value, Context context)
                      throws IOException, InterruptedException {
                  StringTokenizer itr = new StringTokenizer(value.toString());
                  while (itr.hasMoreTokens()) {
                      word.set(itr.nextToken());
                      context.write(word, one);
                  }
              }
          }

- 4. Hello World Using Spring for Apache Hadoop

          public static class IntSumReducer extends Reducer<Text,IntWritable,Text,IntWritable> {
              private IntWritable result = new IntWritable();

              public void reduce(Text key, Iterable<IntWritable> values, Context context)
                      throws IOException, InterruptedException {
                  int sum = 0;
                  for (IntWritable val : values) {
                      sum += val.get();
                  }
                  result.set(sum);
                  context.write(key, result);
              }
          }

          public class Main {
              private static final String[] CONFIGS =
                      new String[] { "META-INF/spring/hadoop-context.xml" };

              public static void main(String[] args) {
                  String[] res = (args != null && args.length > 0 ? args : CONFIGS);
                  AbstractApplicationContext ctx = new ClassPathXmlApplicationContext(res);
                  // shut down the context cleanly along with the VM
                  ctx.registerShutdownHook();
              }
          }
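
To make the data flow of the mapper and reducer above easier to follow, here is a minimal sketch of the same word-count logic in plain Java, with no Hadoop types involved. The class name `WordCountSketch` and the method `countWords` are hypothetical names chosen for illustration; the sketch collapses the map step (emit 1 per token) and the reduce step (sum per word) into a single in-memory pass, which is not how Hadoop distributes the work.

```java
import java.util.Arrays;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

public class WordCountSketch {

    // Hypothetical helper: every token contributes 1 (the "map" step) and the
    // contributions are summed per word (the "reduce" step).
    static Map<String, Integer> countWords(Iterable<String> lines) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            // Same whitespace tokenization as TokenizerMapper
            StringTokenizer itr = new StringTokenizer(line);
            while (itr.hasMoreTokens()) {
                counts.merge(itr.nextToken(), 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // prints {be=2, not=1, or=1, to=2}
        System.out.println(countWords(Arrays.asList("to be or", "not to be")));
    }
}
```

In the real job, Hadoop groups the mapper output by key before calling the reducer, so the summing happens per word across all input splits.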

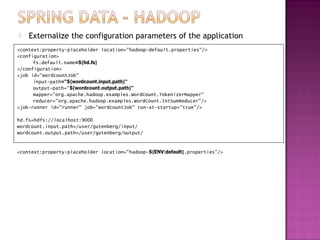

- 5. Externalize the configuration parameters of the application

          <context:property-placeholder location="hadoop-default.properties"/>

          <configuration>
            fs.default.name=${hd.fs}
          </configuration>

          <job id="wordcountJob"
               input-path="${wordcount.input.path}"
               output-path="${wordcount.output.path}"
               mapper="org.apache.hadoop.examples.WordCount.TokenizerMapper"
               reducer="org.apache.hadoop.examples.WordCount.IntSumReducer"/>

          <job-runner id="runner" job="wordcountJob" run-at-startup="true"/>

      Contents of hadoop-default.properties:

          hd.fs=hdfs://localhost:9000
          wordcount.input.path=/user/gutenberg/input/
          wordcount.output.path=/user/gutenberg/output/

      Selecting the properties file through an ENV variable, falling back to the value "default" when it is not set:

          <context:property-placeholder location="hadoop-${ENV:default}.properties"/>
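
The `${key}` and `${key:default}` placeholder forms used above can be illustrated with a small, self-contained sketch. This is not Spring's implementation: the class `PlaceholderSketch` and its `resolve` method are hypothetical, and the sketch looks values up only in a `java.util.Properties` object, whereas Spring can also consult system properties and environment variables.

```java
import java.util.Properties;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class PlaceholderSketch {

    // Matches ${key} or ${key:default}; group 1 is the key, group 2 the optional default
    private static final Pattern PLACEHOLDER =
            Pattern.compile("\\$\\{([^:}]+)(?::([^}]*))?\\}");

    // Hypothetical helper: substitutes every placeholder in the text
    static String resolve(String text, Properties props) {
        Matcher m = PLACEHOLDER.matcher(text);
        StringBuffer out = new StringBuffer();
        while (m.find()) {
            // Falls back to the inline default (possibly null) when the key is missing
            String value = props.getProperty(m.group(1), m.group(2));
            if (value == null) {
                throw new IllegalArgumentException("Unresolved placeholder: " + m.group(1));
            }
            m.appendReplacement(out, Matcher.quoteReplacement(value));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        Properties props = new Properties();
        props.setProperty("hd.fs", "hdfs://localhost:9000");
        System.out.println(resolve("fs.default.name=${hd.fs}", props));
        System.out.println(resolve("hadoop-${ENV:default}.properties", props));
    }
}
```

With no `ENV` value available, the second call resolves to `hadoop-default.properties`; setting `ENV` to, say, `qa` would select `hadoop-qa.properties` instead.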

- 6. Scripting HDFS on the JVM – Type1

          <context:property-placeholder location="hadoop.properties"/>

          <configuration>
            fs.default.name=${hd.fs}
          </configuration>

          <script id="setupScript" location="copy-files.groovy">
            <property name="localSourceFile" value="${localSourceFile}"/>
            <property name="hdfsInputDir" value="${hdfsInputDir}"/>
            <property name="hdfsOutputDir" value="${hdfsOutputDir}"/>
          </script>

      Groovy Script

          if (!fsh.test(hdfsInputDir)) {
              fsh.mkdir(hdfsInputDir);
              fsh.copyFromLocal(localSourceFile, hdfsInputDir);
              fsh.chmod(700, hdfsInputDir)
          }
          if (fsh.test(hdfsOutputDir)) {
              fsh.rmr(hdfsOutputDir)
          }

- 7. Combining HDFS Scripting and Job Submission

          <context:property-placeholder location="hadoop.properties"/>

          <configuration>
            fs.default.name=${hd.fs}
          </configuration>

          <job id="wordcountJob"
               input-path="${wordcount.input.path}"
               output-path="${wordcount.output.path}"
               mapper="org.apache.hadoop.examples.WordCount.TokenizerMapper"
               reducer="org.apache.hadoop.examples.WordCount.IntSumReducer"/>

          <script id="setupScript" location="copy-files.groovy">
            <property name="localSourceFile" value="${localSourceFile}"/>
            <property name="inputDir" value="${wordcount.input.path}"/>
            <property name="outputDir" value="${wordcount.output.path}"/>
          </script>

          <job-runner id="runner" run-at-startup="true"
                      pre-action="setupScript"
                      job="wordcountJob"/>

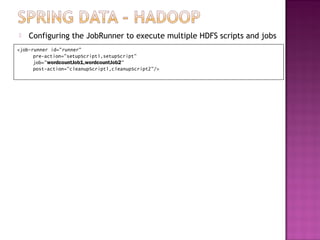

- 8. Configuring the JobRunner to execute multiple HDFS scripts and jobs

          <job-runner id="runner"
                      pre-action="setupScript1,setupScript"
                      job="wordcountJob1,wordcountJob2"
                      post-action="cleanupScript1,cleanupScript2"/>

- 9. Scheduling MapReduce Jobs with a TaskScheduler

          <!-- job definition as before -->
          <job id="wordcountJob" ... />

          <!-- script definition as before -->
          <script id="setupScript" ... />

          <job-runner id="runner" pre-action="setupScript" job="wordcountJob"/>

          <task:scheduled-tasks>
            <task:scheduled ref="runner" method="call" cron="3/30 * * * * ?"/>
          </task:scheduled-tasks>

      Scheduling MapReduce Jobs with Quartz

          <bean id="jobDetail"
                class="org.springframework.scheduling.quartz.MethodInvokingJobDetailFactoryBean">
            <property name="targetObject" ref="runner"/>
            <property name="targetMethod" value="run"/>
          </bean>

          <bean id="cronTrigger" class="org.springframework.scheduling.quartz.CronTriggerBean">
            <property name="jobDetail" ref="jobDetail"/>
            <property name="cronExpression" value="3/30 * * * * ?"/>
          </bean>

          <bean class="org.springframework.scheduling.quartz.SchedulerFactoryBean">
            <property name="triggers" ref="cronTrigger"/>
          </bean>
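
The cron expression `3/30 * * * * ?` used in both scheduling variants has `3/30` in its seconds field, meaning "start at second 3, then every 30 seconds". The sketch below expands such a `start/step` field to show which seconds of each minute trigger the job. It is an illustration only: `CronFieldSketch` and `expand` are hypothetical names, and the sketch handles just this one field syntax, not full cron parsing.

```java
import java.util.ArrayList;
import java.util.List;

public class CronFieldSketch {

    // Hypothetical helper: expands a "start/step" cron field over the range [start, max]
    static List<Integer> expand(String field, int max) {
        String[] parts = field.split("/");
        int start = Integer.parseInt(parts[0]);
        int step = Integer.parseInt(parts[1]);
        List<Integer> values = new ArrayList<>();
        for (int v = start; v <= max; v += step) {
            values.add(v);
        }
        return values;
    }

    public static void main(String[] args) {
        // Seconds field of "3/30 * * * * ?"; prints [3, 33]
        System.out.println(expand("3/30", 59));
    }
}
```

So the job runner is invoked twice per minute, at seconds 3 and 33.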

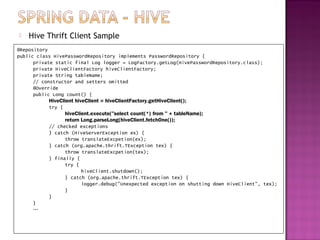

- 11. Creating and configuring a Hive server

          <context:property-placeholder location="hadoop.properties,hive.properties" />

          <configuration id="hadoopConfiguration">
            fs.default.name=${hd.fs}
            mapred.job.tracker=${mapred.job.tracker}
          </configuration>

          <hive-server port="${hive.port}" auto-startup="false"
                       configuration-ref="hadoopConfiguration"
                       properties-location="hive-server.properties">
            hive.exec.scratchdir=/tmp/hive/
          </hive-server>

      Hive Thrift Client

      Because HiveClient is not a thread-safe class, a new instance needs to be created inside any method that is shared across multiple threads.

          <hive-client-factory host="${hive.host}" port="${hive.port}"/>

- 12. Hive Thrift Client Sample

          @Repository
          public class HivePasswordRepository implements PasswordRepository {
              private static final Log logger = LogFactory.getLog(HivePasswordRepository.class);
              private HiveClientFactory hiveClientFactory;
              private String tableName;

              // constructor and setters omitted

              @Override
              public Long count() {
                  // a new client per call, since HiveClient is not thread-safe
                  HiveClient hiveClient = hiveClientFactory.getHiveClient();
                  try {
                      hiveClient.execute("select count(*) from " + tableName);
                      return Long.parseLong(hiveClient.fetchOne());
                      // checked exceptions
                  } catch (HiveServerException ex) {
                      throw translateException(ex);
                  } catch (org.apache.thrift.TException tex) {
                      throw translateException(tex);
                  } finally {
                      try {
                          hiveClient.shutdown();
                      } catch (org.apache.thrift.TException tex) {
                          logger.debug("Unexpected exception on shutting down HiveClient", tex);
                      }
                  }
              }
              …

- 13. Hive JDBC Client

      The JDBC support for Hive lets you use your existing Spring knowledge of JdbcTemplate to interact with Hive. Hive provides a HiveDriver class.

          <bean id="hiveDriver" class="org.apache.hadoop.hive.jdbc.HiveDriver" />

          <bean id="dataSource" class="org.springframework.jdbc.datasource.SimpleDriverDataSource">
            <constructor-arg name="driver" ref="hiveDriver" />
            <constructor-arg name="url" value="${hive.url}"/>
          </bean>

          <bean id="jdbcTemplate" class="org.springframework.jdbc.core.JdbcTemplate">
            <constructor-arg ref="dataSource" />
          </bean>

      Hive JDBC Client Sample

          @Repository
          public class JdbcPasswordRepository implements PasswordRepository {
              private JdbcOperations jdbcOperations;
              private String tableName;

              // constructor and setters omitted

              @Override
              public Long count() {
                  return jdbcOperations.queryForLong("select count(*) from " + tableName);
              }
              …

- 14. Hive Script Runner

          <context:property-placeholder location="hadoop.properties,hive.properties"/>

          <configuration>
            fs.default.name=${hd.fs}
            mapred.job.tracker=${mapred.job.tracker}
          </configuration>

          <hive-server port="${hive.port}" properties-location="hive-server.properties"/>

          <hive-client-factory host="${hive.host}" port="${hive.port}"/>

          <hive-runner id="hiveRunner" run-at-startup="false">
            <script location="apache-log-simple.hql">
              <arguments>
                hiveContribJar=${hiveContribJar}
                localInPath="./data/apache.log"
              </arguments>
            </script>
          </hive-runner>

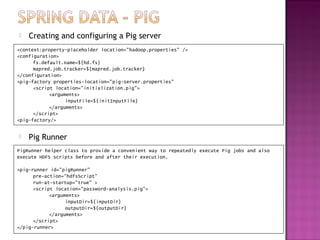

- 16. Creating and configuring a Pig server

          <context:property-placeholder location="hadoop.properties" />

          <configuration>
            fs.default.name=${hd.fs}
            mapred.job.tracker=${mapred.job.tracker}
          </configuration>

          <pig-factory properties-location="pig-server.properties">
            <script location="initialization.pig">
              <arguments>
                inputFile=${initInputFile}
              </arguments>
            </script>
          </pig-factory>

      Pig Runner

      The PigRunner helper class provides a convenient way to repeatedly execute Pig jobs and to execute HDFS scripts before and after their execution.

          <pig-runner id="pigRunner" pre-action="hdfsScript" run-at-startup="true">
            <script location="password-analysis.pig">
              <arguments>
                inputDir=${inputDir}
                outputDir=${outputDir}
              </arguments>
            </script>
          </pig-runner>

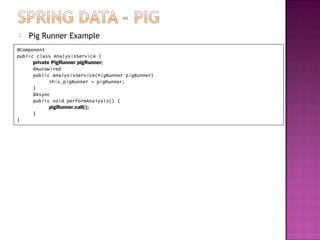

- 18. Pig Runner Example

          @Component
          public class AnalysisService {
              private PigRunner pigRunner;

              @Autowired
              public AnalysisService(PigRunner pigRunner) {
                  this.pigRunner = pigRunner;
              }

              @Async
              public void performAnalysis() {
                  pigRunner.call();
              }
          }

- 19. Controlling Runtime Script Execution

      To have more runtime control over which Pig scripts are executed and which arguments are passed into them, we can use the PigTemplate class.

          <pig-factory properties-location="pig-server.properties"/>

          <pig-template/>

          <beans:bean id="passwordRepository"
                      class="com.oreilly.springdata.hadoop.pig.PigPasswordRepository">
            <beans:constructor-arg ref="pigTemplate"/>
          </beans:bean>

          public class PigPasswordRepository implements PasswordRepository {
              private PigOperations pigOperations;
              private String pigScript = "classpath:password-analysis.pig";

              public void processPasswordFile(String inputFile) {
                  Assert.notNull(inputFile);
                  // output directory derived from a timestamp: year/month/day/hour/minute/second
                  String outputDir = PathUtils.format(
                          "/data/password-repo/output/%1$tY/%1$tm/%1$td/%1$tH/%1$tM/%1$tS");
                  Properties scriptParameters = new Properties();
                  scriptParameters.put("inputDir", inputFile);
                  scriptParameters.put("outputDir", outputDir);
                  pigOperations.executeScript(pigScript, scriptParameters);
              }

              @Override
              public void processPasswordFiles(Collection<String> inputFiles) {
                  for (String inputFile : inputFiles) {
                      processPasswordFile(inputFile);
                  }
              }
          }
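
The path pattern passed to `PathUtils.format` uses the date and time conversions of `java.util.Formatter` (`%1$tY` for the year, `%1$tm` for the month, and so on). The sketch below shows what directory such a pattern yields for a fixed timestamp, using plain `String.format`. It assumes that `PathUtils.format` applies the pattern to the current time in the same way; `TimestampedPathSketch` and `outputDirFor` are hypothetical names.

```java
import java.util.Calendar;
import java.util.Date;
import java.util.GregorianCalendar;
import java.util.Locale;

public class TimestampedPathSketch {

    // Same pattern as in PigPasswordRepository
    static final String PATTERN =
            "/data/password-repo/output/%1$tY/%1$tm/%1$td/%1$tH/%1$tM/%1$tS";

    // Hypothetical helper: formats the pattern for an explicit timestamp
    static String outputDirFor(Date when) {
        // Locale.ROOT keeps the digits ASCII regardless of the default locale
        return String.format(Locale.ROOT, PATTERN, when);
    }

    public static void main(String[] args) {
        Date when = new GregorianCalendar(2012, Calendar.DECEMBER, 11, 2, 25, 42).getTime();
        // prints /data/password-repo/output/2012/12/11/02/25/42
        System.out.println(outputDirFor(when));
    }
}
```

Because the path goes down to the second, each script run gets its own output directory as long as runs start at least one second apart.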

- 21. HBASE Java Client w/o Spring Data

      The HTable class is the main way in Java to interact with HBase. It allows you to put data into a table using a Put class, get data by key using a Get class, and delete data using a Delete class. You query that data using a Scan class, which lets you specify key ranges as well as filter criteria.

          Configuration configuration = new Configuration(); // Hadoop configuration object
          HTable table = new HTable(configuration, "users");
          Put p = new Put(Bytes.toBytes("user1"));
          p.add(Bytes.toBytes("cfInfo"), Bytes.toBytes("qUser"), Bytes.toBytes("user1"));
          p.add(Bytes.toBytes("cfInfo"), Bytes.toBytes("qEmail"), Bytes.toBytes("[email protected]"));
          p.add(Bytes.toBytes("cfInfo"), Bytes.toBytes("qPassword"), Bytes.toBytes("user1pwd"));
          table.put(p);

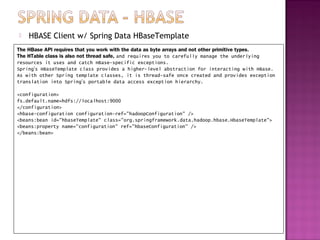

- 22. HBASE Client w/ Spring Data HBaseTemplate

      The HBase API requires that you work with the data as byte arrays and not other primitive types. The HTable class is also not thread-safe, and requires you to carefully manage the underlying resources it uses and catch HBase-specific exceptions. Spring’s HbaseTemplate class provides a higher-level abstraction for interacting with HBase. As with other Spring template classes, it is thread-safe once created and provides exception translation into Spring’s portable data access exception hierarchy.

          <configuration>
            fs.default.name=hdfs://localhost:9000
          </configuration>

          <hbase-configuration configuration-ref="hadoopConfiguration" />

          <beans:bean id="hbaseTemplate" class="org.springframework.data.hadoop.hbase.HbaseTemplate">
            <beans:property name="configuration" ref="hbaseConfiguration" />
          </beans:bean>
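
To illustrate what "working with the data as byte arrays" involves, the following sketch converts a string and a long to bytes and back using only the JDK. It is an analogy for the kind of conversion that `Bytes.toBytes` performs, not HBase's own code; `ByteCodecSketch` and its methods are hypothetical names, and the UTF-8 and big-endian encodings are assumptions of this sketch.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

public class ByteCodecSketch {

    // Strings are stored as UTF-8 bytes (assumed encoding for this sketch)
    static byte[] encode(String s) {
        return s.getBytes(StandardCharsets.UTF_8);
    }

    static String decodeString(byte[] b) {
        return new String(b, StandardCharsets.UTF_8);
    }

    // Longs are stored as 8 big-endian bytes (ByteBuffer's default byte order)
    static byte[] encode(long v) {
        return ByteBuffer.allocate(Long.BYTES).putLong(v).array();
    }

    static long decodeLong(byte[] b) {
        return ByteBuffer.wrap(b).getLong();
    }

    public static void main(String[] args) {
        byte[] rowKey = encode("user1");
        byte[] counter = encode(42L);
        System.out.println(decodeString(rowKey) + " " + decodeLong(counter));
    }
}
```

Every row key, column family, qualifier, and value has to pass through a conversion like this, which is part of the boilerplate a template class can hide behind mapper callbacks.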

- 23. HBASE Client w/ Spring Data HBaseTemplate
